The Crawlability-to-Rankings Gap: Why Google Understands Your Pages But Won't Rank Them

Google Search Console's page indexing report marks a URL as "Submitted and indexed," and most site owners interpret that green status as mission accomplished. For a 47-location regional HVAC company I audited earlier this year, 1,800 of their 2,100 indexed pages generated exactly zero organic clicks over a rolling 90-day window. The pages were crawled, parsed, and stored in Google's index. They were also functionally invisible in search results.

This is the crawlability vs. rankability gap, and it's one of the most common technical SEO problems I encounter when working with local and multi-location businesses. The misunderstanding it corrects is simple but consequential: being indexed is eligibility, not placement. A page in Google's index can appear in results. Nothing guarantees that it will. As one analysis of indexing status puts it, "indexing status indicates that the page is eligible to rank in SERPs, but doesn't necessarily mean that the page is currently ranking."

The mechanism that separates indexed-but-invisible pages from pages that actually pull traffic has at least four distinct layers. Each one can independently block a well-crawled page from earning rankings, and local businesses tend to trip over all four simultaneously.

The Four-Stage Pipeline Google Runs on Every URL

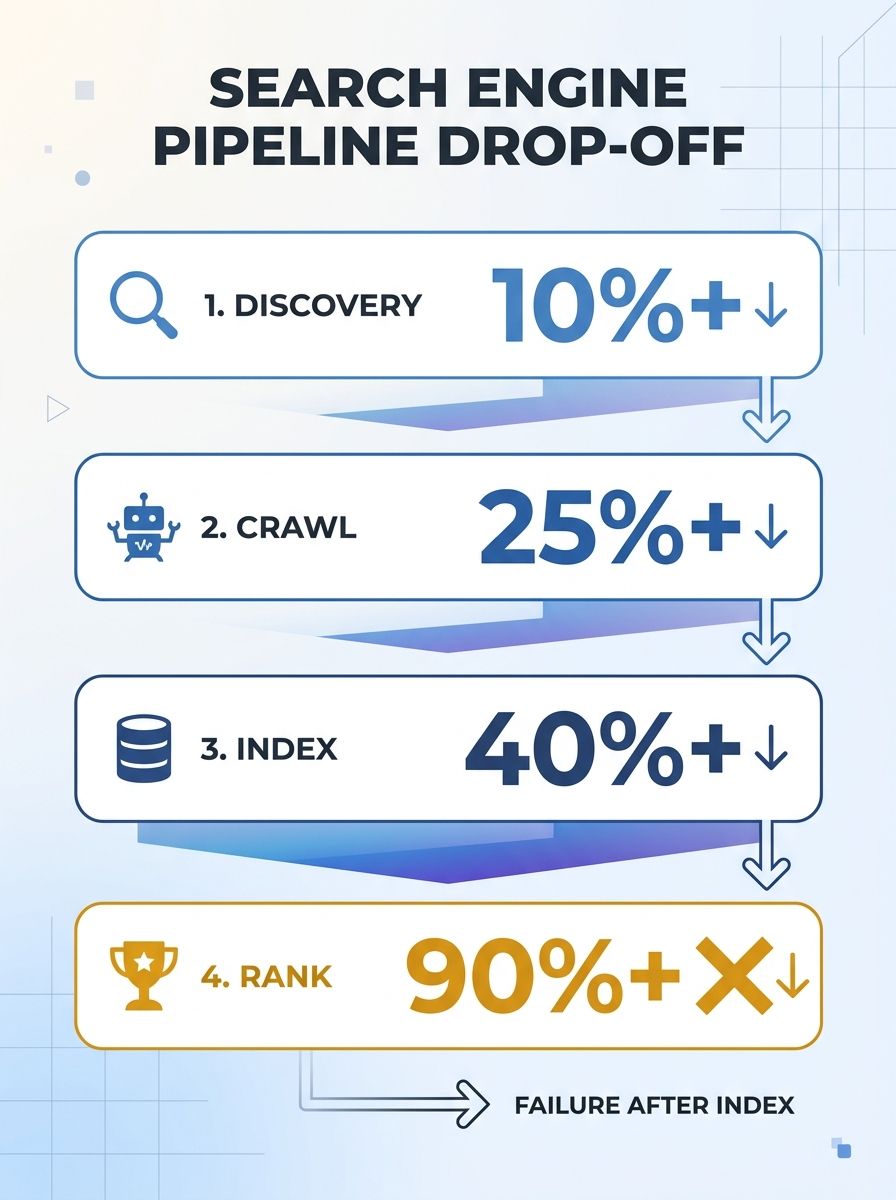

Before a page can rank for anything, it passes through a sequential process: discovery, crawl, indexation, and ranking evaluation. Each stage has its own failure modes.

Discovery happens when Googlebot finds a URL through a sitemap, an internal link, or an external link. For local businesses with separate location pages, discovery problems are rare because these pages usually exist in the primary navigation.

Crawling is the stage where Googlebot actually requests and downloads the page content. Crawl budget matters here. Google allocates crawl resources based on two factors: the crawl capacity limit (how many requests your server can handle without degrading) and crawl demand (how much Google "wants" your content based on popularity and freshness). A multi-location business with hundreds of thin location pages can burn through crawl budget on low-value URLs while critical service pages wait in the queue.
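To see where crawl activity is actually going, server access logs are a reasonable starting point. The sketch below is a minimal example, assuming logs in the common "combined" format. The function name, the sample paths, and the sample IP are all hypothetical, and matching on the user-agent string alone does not prove a request came from Google, since that string can be spoofed.

```python
import re
from collections import Counter

# Assumes the "combined" access log format:
# ip - - [time] "METHOD path protocol" status size "referer" "user-agent"
LOG_LINE = re.compile(
    r'"(?:GET|HEAD) (?P<path>\S+) [^"]*" \d{3} \S+ "[^"]*" "(?P<agent>[^"]*)"'
)

def googlebot_hits_by_section(log_lines):
    """Count requests whose user agent mentions Googlebot, per top-level section."""
    hits = Counter()
    for line in log_lines:
        match = LOG_LINE.search(line)
        if not match or "Googlebot" not in match.group("agent"):
            continue
        path = match.group("path").split("?", 1)[0]  # drop the query string
        segments = [s for s in path.split("/") if s]
        section = "/" + segments[0] + "/" if segments else "/"
        hits[section] += 1
    return hits

# Hypothetical sample data for illustration only.
GOOGLEBOT_UA = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
BROWSER_UA = "Mozilla/5.0 (Windows NT 10.0; Win64; x64)"

def make_line(path, agent):
    return (f'203.0.113.5 - - [01/Jan/2025:00:00:00 +0000] '
            f'"GET {path} HTTP/1.1" 200 512 "-" "{agent}"')

sample_log = [
    make_line("/locations/tampa/", GOOGLEBOT_UA),
    make_line("/locations/orlando/", GOOGLEBOT_UA),
    make_line("/locations/miami/?ref=nav", GOOGLEBOT_UA),
    make_line("/services/hvac-repair/", GOOGLEBOT_UA),
    make_line("/services/hvac-repair/", BROWSER_UA),
]

for section, count in googlebot_hits_by_section(sample_log).most_common():
    print(section, count)
```

If most of the crawl activity lands on thin location URLs while service pages see very little, that imbalance is worth investigating before any ranking work.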

Indexation means Google has processed the page, rendered its JavaScript, and stored a representation of it. This is where most people stop paying attention. The green "Indexed" checkmark in Search Console feels like a finish line.

Ranking evaluation is where Google's ranking signals (commonly cited as 200 or more) determine whether the page deserves to appear for any given query. This is where the gap lives. A page can sail through stages one through three and stall completely at stage four.

If you've already confirmed your pages are being crawled properly, the question shifts from "Can Google find me?" to "Why won't Google show me?" That shift is where the real diagnostic work on indexed-but-not-ranking pages begins.
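Before diagnosing why pages don't rank, it helps to measure how large the gap is. The following is a minimal sketch, assuming you have exported a list of indexed URLs and a per-URL click total for the same reporting window. The function names and the URL pattern are hypothetical, and the numbers mirror the HVAC audit described earlier.

```python
def zero_click_pages(indexed_urls, clicks_by_url):
    """Return indexed URLs with no recorded clicks in the reporting window."""
    return sorted(
        url for url in set(indexed_urls) if clicks_by_url.get(url, 0) == 0
    )

def zero_click_share(indexed_urls, clicks_by_url):
    """Fraction of unique indexed URLs that earned zero clicks."""
    unique = set(indexed_urls)
    if not unique:
        return 0.0
    return len(zero_click_pages(unique, clicks_by_url)) / len(unique)

# Illustrative data shaped like the audit above: 2,100 indexed pages,
# of which only 300 earned any clicks.
indexed = [f"/page-{i}/" for i in range(2100)]
clicks = {f"/page-{i}/": 5 for i in range(300)}

share = zero_click_share(indexed, clicks)
print(f"{share:.1%} of indexed pages earned zero clicks")
```

URLs missing from the click data are treated as zero-click pages, which matches how performance exports typically omit URLs with no activity.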

Where Local Pages Fail the Intent Filter

Google's ranking system evaluates whether a page satisfies the specific intent behind a query better than every other indexed alternative. For local businesses, this filter is brutally competitive because Google has very specific expectations about what local intent pages should look like.

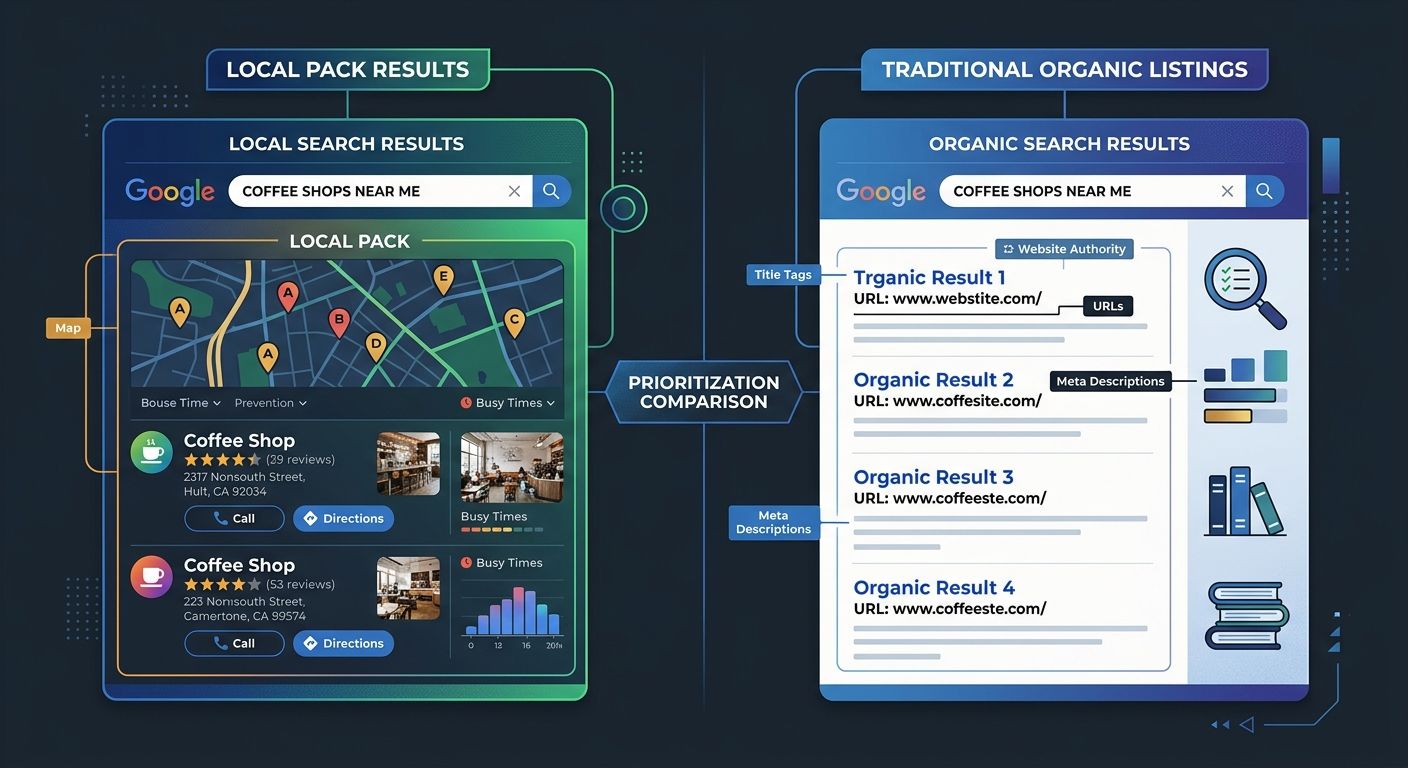

Consider a query like "emergency plumber near me." Google's AI systems have learned from billions of interactions that people searching this phrase want a Google Business Profile listing with reviews, hours, and a phone number. They want a local pack, not a blog post. If your /emergency-plumbing-services/ page is a 600-word content piece about when to call an emergency plumber, it's answering the wrong question. The page is indexed. The intent match is poor. Rankings stay flat.

I've written before about how to diagnose intent mismatches in your top-ranking pages, and the framework applies doubly to local service pages. Pull up the actual SERP for every keyword you're targeting and categorize what Google is showing: local pack results, informational content, transactional landing pages, or some combination. If your page format doesn't match what dominates positions one through five, indexation won't save you.
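The categorization step above can be recorded in a simple structure once you've labeled the SERP by hand. This is only a sketch: the labels ("local_pack", "informational", "transactional"), the function names, and the 60% threshold are assumptions for illustration, not anything Google publishes.

```python
from collections import Counter

def dominant_format(top_results):
    """Return the most common result format and its share of the top results."""
    if not top_results:
        raise ValueError("top_results must contain at least one label")
    label, count = Counter(top_results).most_common(1)[0]
    return label, count / len(top_results)

def intent_mismatch(page_format, top_results, threshold=0.6):
    """True when one format clearly dominates and the page is a different format."""
    label, share = dominant_format(top_results)
    return share >= threshold and label != page_format

# Hand-labeled positions one through five for a hypothetical keyword.
serp = ["local_pack", "local_pack", "local_pack", "transactional", "informational"]

print(dominant_format(serp))
print(intent_mismatch("informational", serp))
```

Running this across every target keyword gives a quick list of pages whose format disagrees with what currently dominates the results.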

Google's own documentation acknowledges this indirectly, noting that search results are customized for each user's search history, location, and many other variables. A page indexed in Mountain View might never surface for a user in Tampa if the content doesn't align with how Google interprets Tampa-specific search intent.

For multi-location businesses, this means a single template stamped across 50 city pages with swapped location names will get indexed and fail the intent filter simultaneously. Google can tell when the content is substantively identical, and it has no reason to rank all 50 versions.
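One rough way to check whether your own location pages fall into this pattern is to compare them after masking the city name. The sketch below uses word shingles and Jaccard similarity, which is a common near-duplicate heuristic and not a description of how Google detects duplication. The function names and sample copy are hypothetical.

```python
import re

def shingles(text, city, size=3):
    """Word n-grams of the text with the city name replaced by a placeholder."""
    masked = re.sub(re.escape(city), "{city}", text, flags=re.IGNORECASE).lower()
    words = re.findall(r"[a-z0-9{}']+", masked)
    return {tuple(words[i:i + size]) for i in range(len(words) - size + 1)}

def jaccard(a, b):
    """Overlap between two shingle sets, from 0.0 (disjoint) to 1.0 (identical)."""
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)

tampa = "Need AC repair in Tampa? Our Tampa technicians arrive within two hours."
orlando = "Need AC repair in Orlando? Our Orlando technicians arrive within two hours."
miami = ("Our Miami office handles flood-damaged compressors near the port, "
         "including salt-air corrosion checks.")

print(jaccard(shingles(tampa, "Tampa"), shingles(orlando, "Orlando")))
print(jaccard(shingles(tampa, "Tampa"), shingles(miami, "Miami")))
```

A score near 1.0 after masking means the only difference between two pages is the city name, which is the template-stamping problem described above.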

Authority Signals and the Local Trust Problem

Even when a page matches search intent perfectly, it still needs enough authority to outrank competitors. Site authority signals in local SEO come from a combination of sources that most local businesses underinvest in.

Link equity remains foundational. As Search Engine Land's analysis of the evolving authority model points out, "links were always just one signal. Now search engines can understand dozens more." But for local businesses, links provide the crawl paths and topical relevance that form the baseline. A local roofing company with zero backlinks from local news outlets, trade associations, or community organizations is competing with an empty hand.

Brand signals have grown in importance through the February 2026 Discover Core Update, which prioritized original, expert-driven content and explicit E-E-A-T markers. For local businesses, this translates into concrete requirements:

Author pages with credentials tied to real people at the business

Google Business Profile completeness and review velocity

Citations in local directories that match NAP (name, address, phone) data exactly

Coverage or mentions in local publications

I've seen local medical practices jump from page three to the local pack within eight weeks by doing nothing technical at all. They added physician bios with board certifications, linked those bios from every service page, and asked patients to leave Google reviews. The technical foundation was already there. The missing piece was trust signals that Google uses to evaluate authority beyond raw link counts.
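The NAP consistency item in the list above is the easiest of these to audit programmatically. Here is a minimal sketch, assuming US-style 10-digit phone numbers and a small, hypothetical abbreviation table. The business details and directory names are made up, and the normalization rules are my own simplification rather than any directory's actual matching logic.

```python
import re

# Hypothetical abbreviation table; extend it for your own address formats.
ABBREVIATIONS = {
    "street": "st", "avenue": "ave", "suite": "ste",
    "road": "rd", "boulevard": "blvd",
}

def normalize_phone(phone):
    """Keep the last 10 digits (assumes US numbers, with or without a +1)."""
    return re.sub(r"\D", "", phone)[-10:]

def normalize_text(value):
    """Lowercase, strip punctuation, and abbreviate common street words."""
    words = re.findall(r"[a-z0-9]+", value.lower())
    return " ".join(ABBREVIATIONS.get(word, word) for word in words)

def normalize_nap(record):
    return (
        normalize_text(record["name"]),
        normalize_text(record["address"]),
        normalize_phone(record["phone"]),
    )

def nap_mismatches(canonical, citations):
    """Return the sources whose NAP differs from the canonical record."""
    expected = normalize_nap(canonical)
    return [c["source"] for c in citations if normalize_nap(c) != expected]

canonical = {"name": "Acme Dental", "address": "120 Main Street, Suite 4",
             "phone": "(813) 555-0100"}
citations = [
    {"source": "directory-a", "name": "Acme Dental",
     "address": "120 Main St Ste 4", "phone": "+1 813-555-0100"},
    {"source": "directory-b", "name": "Acme Dental",
     "address": "120 Main Street, Suite 4", "phone": "813-555-0199"},
]

print(nap_mismatches(canonical, citations))
```

The normalization only filters out formatting noise so that substantive mismatches stand out. For the listings themselves, the safer practice is still to publish the exact same string everywhere.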

How Internal Architecture Becomes a Ranking Input

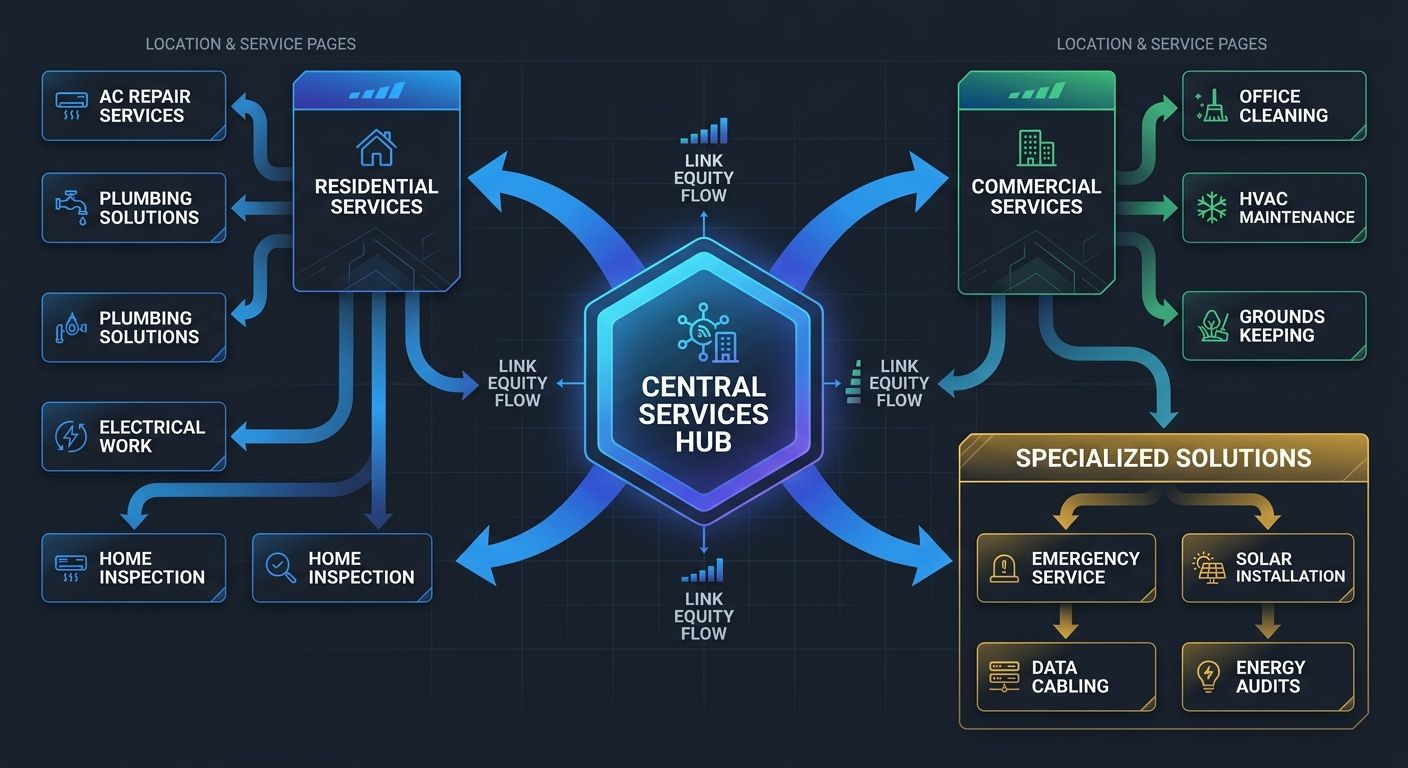

Internal linking structure is the mechanism through which your site communicates topical relationships and page importance to Google. For local businesses, broken internal architecture is one of the most common reasons for the crawlability-to-rankings gap.

Here's how it works mechanically. Every internal link passes a fraction of the linking page's authority to the destination page. When your homepage (typically your highest-authority page) links to /services/ which links to /services/plumbing/ which links to /services/plumbing/drain-cleaning/, each hop dilutes the signal. If your most important revenue-generating service page sits four clicks deep with only one internal link pointing to it, you've told Google it's unimportant.
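Click depth and inbound link counts can be computed from any crawl export that lists which pages link to which. The following is a sketch using a breadth-first search over a hypothetical link graph that follows the homepage-to-drain-cleaning chain described above. The function names and the dictionary format are assumptions.

```python
from collections import deque

def click_depths(links, start="/"):
    """Minimum number of clicks from the start page to each reachable page."""
    depths = {start: 0}
    queue = deque([start])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths

def inbound_counts(links):
    """Number of distinct pages linking to each target (self-links ignored)."""
    counts = {}
    for source, targets in links.items():
        for target in set(targets):
            if target != source:
                counts[target] = counts.get(target, 0) + 1
    return counts

# Hypothetical internal link graph: page -> pages it links to.
site = {
    "/": ["/services/", "/about/"],
    "/services/": ["/services/plumbing/"],
    "/services/plumbing/": ["/services/plumbing/drain-cleaning/"],
    "/about/": ["/"],
}

depths = click_depths(site)
inbound = inbound_counts(site)
page = "/services/plumbing/drain-cleaning/"
print(page, "depth:", depths[page], "inbound links:", inbound[page])
```

Pages that are both deep and have a single inbound link are the first candidates for additional internal links from the homepage or hub pages.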

Moz's documentation on domain authority confirms this pattern: internal links guide users to relevant content and distribute link equity across your site, directly supporting both crawlability and ranking potential. A clear linking strategy improves how Google understands the hierarchy of your pages.

For multi-location businesses, the architecture problem compounds. I audited a 30-location dental group where each location had its own subfolder with duplicate service pages. That's 30 versions of "teeth whitening" competing with each other internally, none receiving concentrated link equity. When we restructured their content architecture to signal topic authority properly, consolidated to single service pages with location-specific sections, and built hub pages for each metro area, organic traffic to service pages increased 140% over four months. The technical crawlability hadn't changed at all. Every page had been indexed the entire time.

The lesson: if your site architecture doesn't signal topical authority to Google, you can have perfect crawlability and still watch your pages gather dust in the index.

AI Retrieval Adds a Fifth Layer

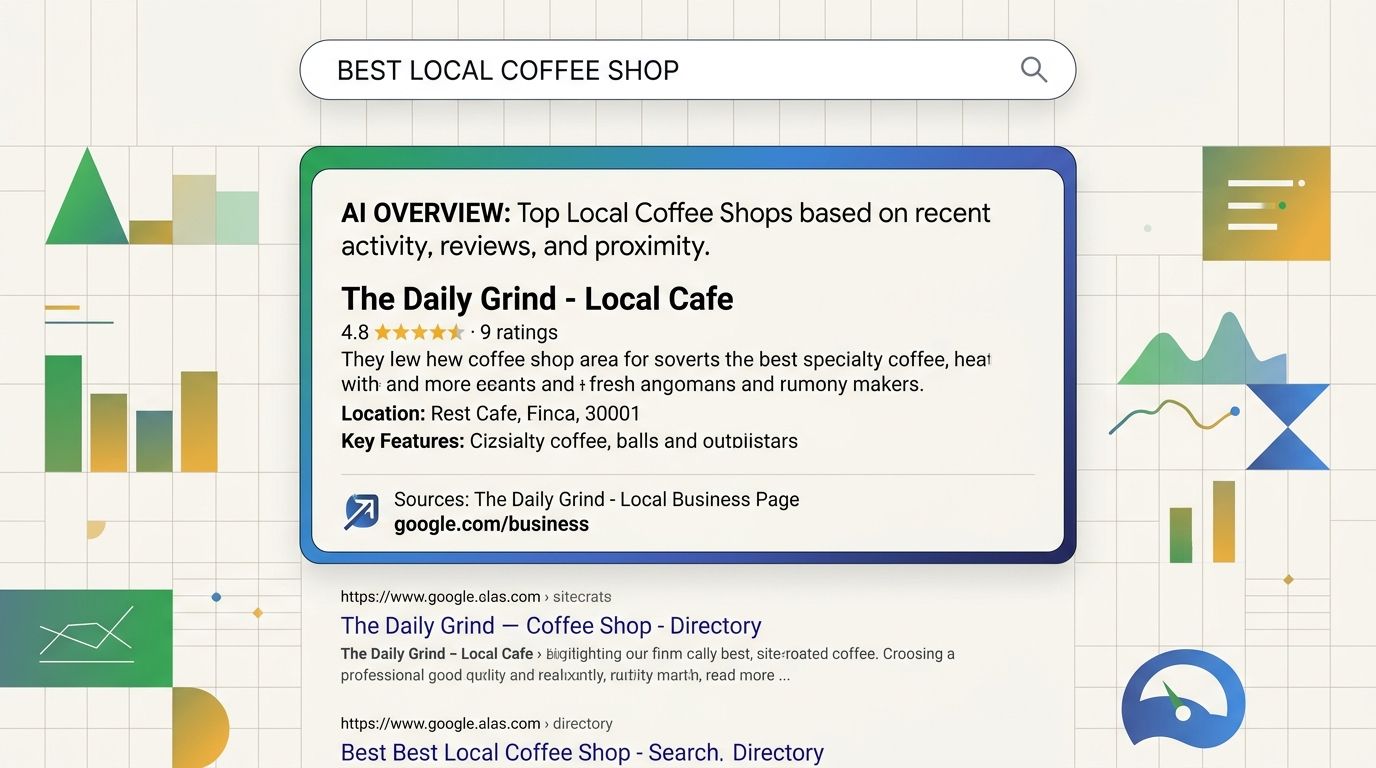

The ranking mechanism now includes a layer that didn't exist two years ago: eligibility for AI-generated summaries. Google's AI Overviews pull from indexed pages and synthesize answers directly on the SERP, often above all organic listings.

For local businesses, this layer introduces a specific structural requirement. AI Overviews extract information from well-defined content chunks. Pages with clear heading hierarchies (H1 through H3), concise answers near the top of sections, and structured data markup are more likely to be cited as sources. Pages with long, unbroken prose blocks or content buried behind JavaScript interactions get skipped.

Server logs reveal another dimension of this shift. AI crawlers from ChatGPT, Claude, and Perplexity now request pages independently of Googlebot, but they generally don't execute client-side JavaScript. If your local business site renders key content through JavaScript frameworks, these AI systems see an empty shell. Your page is technically indexed by Google but invisible to the AI retrieval layer that increasingly determines what searchers see first.
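A quick way to approximate what a non-rendering crawler sees is to extract the text from the raw HTML response and check whether your key phrases are present. The sketch below uses only Python's standard-library HTML parser. The class name, function names, and sample markup are hypothetical, and real crawlers may differ in how they parse pages.

```python
from html.parser import HTMLParser

class StaticText(HTMLParser):
    """Collects text from raw HTML, ignoring script and style contents."""

    def __init__(self):
        super().__init__()
        self.chunks = []
        self._skip_depth = 0

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth:
            self.chunks.append(data)

def static_text(html):
    parser = StaticText()
    parser.feed(html)
    parser.close()
    return " ".join(" ".join(parser.chunks).split())

def missing_without_js(html, required_phrases):
    """Phrases that do not appear in the page text unless JavaScript runs."""
    text = static_text(html).lower()
    return [p for p in required_phrases if p.lower() not in text]

# Hypothetical responses: a JavaScript shell and a server-rendered page.
js_shell = ('<html><body><div id="root"></div>'
            '<script>render("Emergency HVAC repair in Phoenix")</script>'
            '</body></html>')
server_rendered = ('<html><body><h1>Emergency HVAC repair in Phoenix</h1>'
                   '</body></html>')

phrases = ["emergency HVAC repair"]
print(missing_without_js(js_shell, phrases))
print(missing_without_js(server_rendered, phrases))
```

Feed it the body of a plain HTTP response for each important page. Any phrase it reports as missing only exists after JavaScript executes.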

The practical impact for local businesses: a competitor's page that clearly answers "What does emergency HVAC repair cost in Phoenix?" with a structured, schema-marked section will get cited in an AI Overview, while your longer, more thorough page gets bypassed because the answer isn't extractable.
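As an illustration of what a "schema-marked section" can look like, here is a sketch that generates schema.org FAQPage markup as JSON-LD. The function name is hypothetical and the answer text is a placeholder to replace with your real pricing. Whether any search feature actually uses the markup is up to the search engine, so treat this as making the answer easier to extract, not as a guarantee of citation.

```python
import json

def faq_jsonld(question, answer):
    """Build a JSON-LD script tag using schema.org's FAQPage vocabulary."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
        ],
    }
    # Escape "</" so the answer text cannot close the script tag early.
    body = json.dumps(data, indent=2).replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{body}\n</script>'

print(faq_jsonld(
    "What does emergency HVAC repair cost in Phoenix?",
    "PLACEHOLDER: state your actual price range and what it includes.",
))
```

The question and answer in the markup should match the text visible on the page, with the answer placed directly under the heading that asks the question.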

If you're already tracking how AI search systems interact with your content differently, you've got a head start. If you're still relying solely on traditional rank tracking, you're measuring half the picture.

Where the Model Breaks

This four-layer model explains the majority of cases where crawled-and-indexed pages fail to rank. But it doesn't explain everything.

Google's own inconsistencies create ranking gaps that no amount of optimization can close. The March 2026 Spam Update suppressed pages that Google's classifiers flagged as low-effort AI content, even when some of those pages were hand-written, well-structured, and genuinely useful. If your pages got caught in a false positive, the problem isn't your understanding of the mechanism. It's algorithmic overcorrection.

Hyper-local competition can also make the model misleading. In dense metro areas, Google sometimes shows a local pack dominated by businesses with 500+ reviews and years of citation history. A new business with perfect technical SEO, strong content, and solid architecture still won't displace an established competitor overnight. Authority accumulation has a time component that no technical fix accelerates.

Personalization effects make ranking position inconsistent across users. Google confirms that search results vary by location, search history, and other user-specific variables. A page might rank in position three for one user in your target zip code and in position 30 for another. If you're checking rankings from your own browser after months of visiting your own site, you're seeing a distorted view.

The crawlability-to-rankings gap is real, and understanding the mechanism gives you a diagnostic framework that moves beyond "make sure Google can crawl your pages." But the mechanism has boundaries. Sometimes a page is indexed, optimized, authoritative, well-structured, and intent-matched, and it still doesn't rank because a competitor simply has more accumulated trust. The model identifies the layers where you can intervene. It can't guarantee that intervention will be enough, especially in competitive local markets where the top positions were earned over years, not quarters.

Sarah Chen

SEO strategist and web analytics expert with over 10 years of experience helping businesses improve their organic search visibility. Sarah covers keyword tracking, site audits, and data-driven growth strategies.