The AI SEO Tool Blindspot: Why Your Traditional Platform Can't Track Answer Engine Results

Semrush, Ahrefs, and Moz pull ranking data by scraping search engine results pages. That approach has been the foundation of SEO measurement for over a decade, and it's the exact reason these platforms can't tell you whether ChatGPT, Perplexity, or Google's AI Overviews are recommending your brand to potential customers. The mechanism they rely on was built for a world where search results were a list of ten blue links. Answer engines produce something structurally different, and the mismatch between these two architectures creates AI search visibility gaps that most marketing teams don't realize exist until traffic starts dropping without any change in their keyword rankings.

How Traditional SEO Tools Actually Collect Data

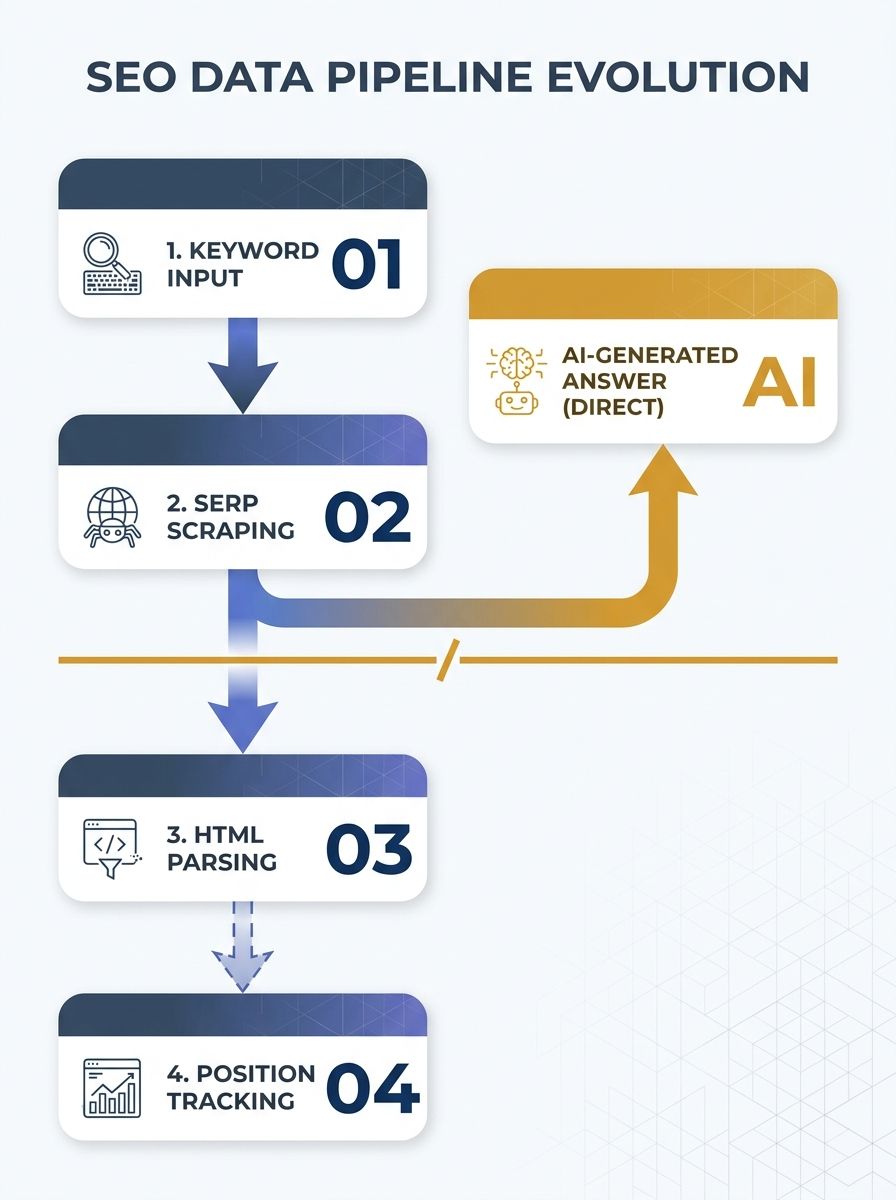

Every major SEO platform operates on the same basic loop. You give it a list of keywords. It sends automated queries to Google (and sometimes Bing). It parses the HTML of the returned SERP, identifies which URLs appear in positions 1 through 100, and logs that data over time. You get trend lines showing whether your pages are climbing or falling for each target keyword.

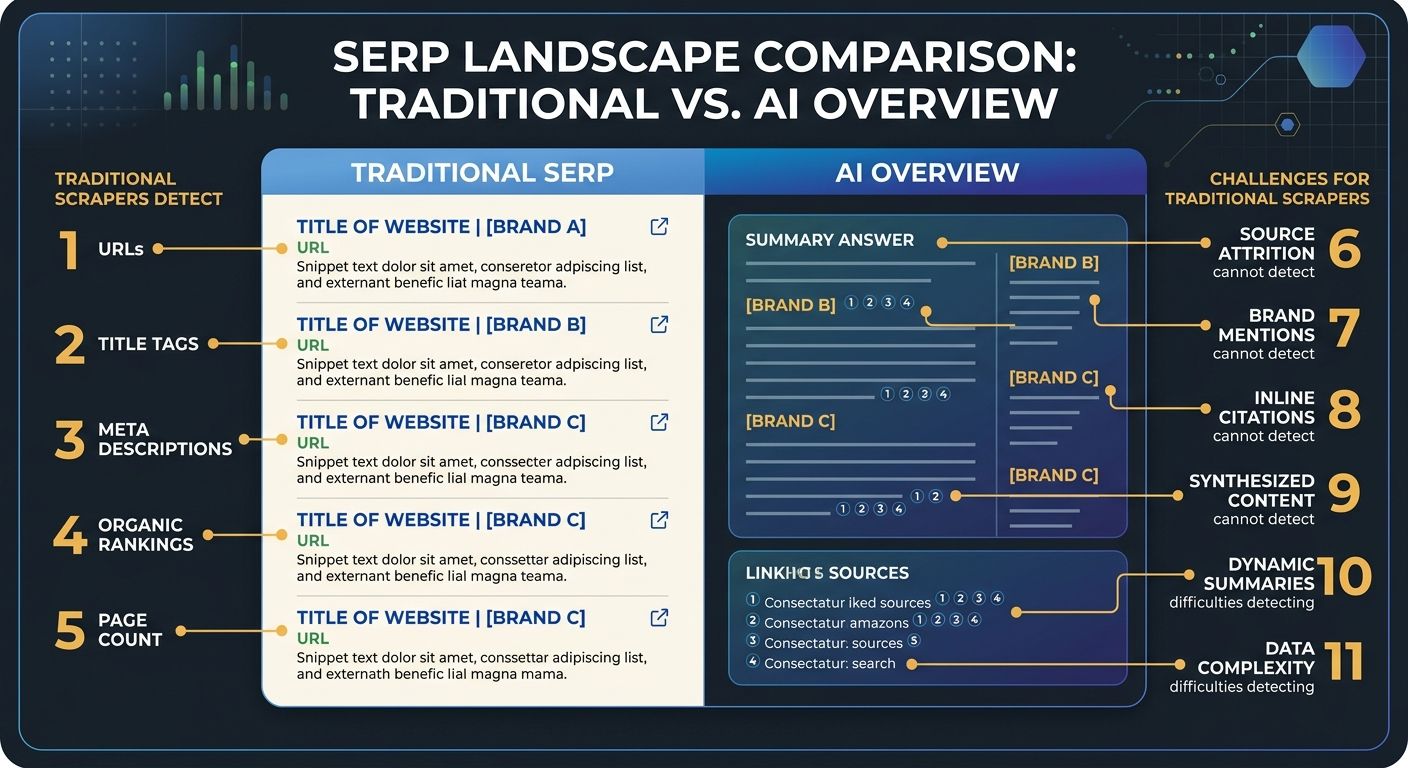

This works because traditional SERPs have a predictable structure. There's a ranked list of organic results, maybe some featured snippets, a local pack, some ads. The HTML elements that contain each result follow patterns that scrapers can reliably extract. Your tool knows a "position 3 ranking" because it can identify the third organic result container in the page source.
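
To make that loop concrete, here is a minimal sketch of position extraction using only Python's standard library. The `organic-result` and `ai-overview` class names and the sample markup are made up for illustration; real SERP markup is different and changes often, and commercial rank trackers are certainly more elaborate than this.

```python
from html.parser import HTMLParser
from urllib.parse import urlparse


class OrganicResultParser(HTMLParser):
    """Collects the first link inside each organic-result container, in page order."""

    def __init__(self):
        super().__init__()
        self.urls = []
        self._depth = 0          # div nesting depth inside the current container
        self._have_link = False  # True once the container's first link is stored

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if self._depth == 0:
            # Hypothetical marker: <div class="organic-result">
            classes = (attrs.get("class") or "").split()
            if tag == "div" and "organic-result" in classes:
                self._depth = 1
                self._have_link = False
            return  # anything outside a result container is ignored
        if tag == "div":
            self._depth += 1
        elif tag == "a" and not self._have_link and attrs.get("href"):
            self.urls.append(attrs["href"])
            self._have_link = True

    def handle_endtag(self, tag):
        if tag == "div" and self._depth > 0:
            self._depth -= 1


def organic_positions(html):
    """Map position (1-based) to the URL found in that organic result."""
    parser = OrganicResultParser()
    parser.feed(html)
    return {pos: url for pos, url in enumerate(parser.urls, start=1)}


def position_of(html, domain):
    """Return the first organic position held by `domain`, or None."""
    for pos, url in organic_positions(html).items():
        host = urlparse(url).hostname or ""
        if host == domain or host.endswith("." + domain):
            return pos
    return None


# Made-up sample page: one generated block followed by three organic results.
SAMPLE_SERP = """
<div class="ai-overview"><p>Generated answer citing <a href="https://a.example/x">Brand A</a>.</p></div>
<div class="organic-result"><a href="https://first.example/page">First</a></div>
<div class="organic-result"><div><a href="https://www.second.example/">Second</a></div></div>
<div class="organic-result"><a href="https://third.example/crm">Third</a></div>
"""

print(position_of(SAMPLE_SERP, "second.example"))  # 2
print(position_of(SAMPLE_SERP, "a.example"))       # None
```

Note that the link inside the generated block never reaches the output. A parser built around ranked result containers has no slot for it.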

The entire data pipeline depends on two assumptions: that search results are URL-based, and that ranking position is the primary signal of visibility. Both assumptions fail when an AI engine generates a synthesized answer instead of a ranked list.

The Measurement Architecture of Answer Engines

When someone asks Perplexity "what CRM should a small law firm use" or triggers a Google AI Overview for the same query, the response isn't a ranked list of URLs. It's a generated paragraph (or several paragraphs) that may cite sources, mention brands by name, or link to supporting pages. The structure varies by platform and by query. ChatGPT might mention three brands in a flowing paragraph with no links at all. Google's AI Overview might include inline citations to four domains. Perplexity typically provides numbered source references at the bottom.

Your traditional SEO tool sees none of this. When Ahrefs scrapes the SERP for "best CRM for small law firms," it reads the organic results below the AI Overview. It might even detect that an AI Overview exists and note its presence. But it doesn't parse who got cited inside that AI-generated block. It doesn't track whether your brand was named. It doesn't measure whether the AI recommended your product or your competitor's.

Research shared at BrightonSEO San Diego quantified the disconnect: only 19% of traditional SEO ranking factors correlate with how AI tools choose which businesses to recommend. Put differently, roughly 81% of the signals your traditional platform tracks have little measurable bearing on answer engine visibility.

This is the architectural core of the blindspot. Your tool is reporting positions in a game that fewer users are playing. Google AI Overviews now appear in roughly 29% of searches, and when they're present, click-through rates to the top organic result drop by up to 79%. If you're tracking only organic position, your dashboard shows a stable #1 ranking while your actual visibility craters beneath a generated answer that doesn't mention you.
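
A quick back-of-envelope calculation shows what those two figures imply together. This sketch assumes the 29% share applies evenly to your own keyword set and uses the worst-case 79% drop, so treat the result as an upper bound on the loss, not a forecast.

```python
def blended_click_retention(overview_share, ctr_drop):
    """Fraction of baseline #1 clicks retained across all searches.

    overview_share: share of searches that show an AI Overview (0..1)
    ctr_drop: relative CTR loss for the top result when one is shown (0..1)
    """
    unaffected = 1 - overview_share
    affected = overview_share * (1 - ctr_drop)
    return unaffected + affected


retention = blended_click_retention(overview_share=0.29, ctr_drop=0.79)
print(f"{retention:.1%}")  # 77.1%
```

Under those assumptions, a page holding position 1 could lose close to 23% of its clicks while its reported ranking never moves.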

Four Metrics Your Platform Doesn't Report

The answer engine optimization metrics that matter in this environment fall into categories that traditional tools were never architected to capture. I've seen clients with stable rankings and declining revenue, and when we dug into these four areas, the cause became obvious.

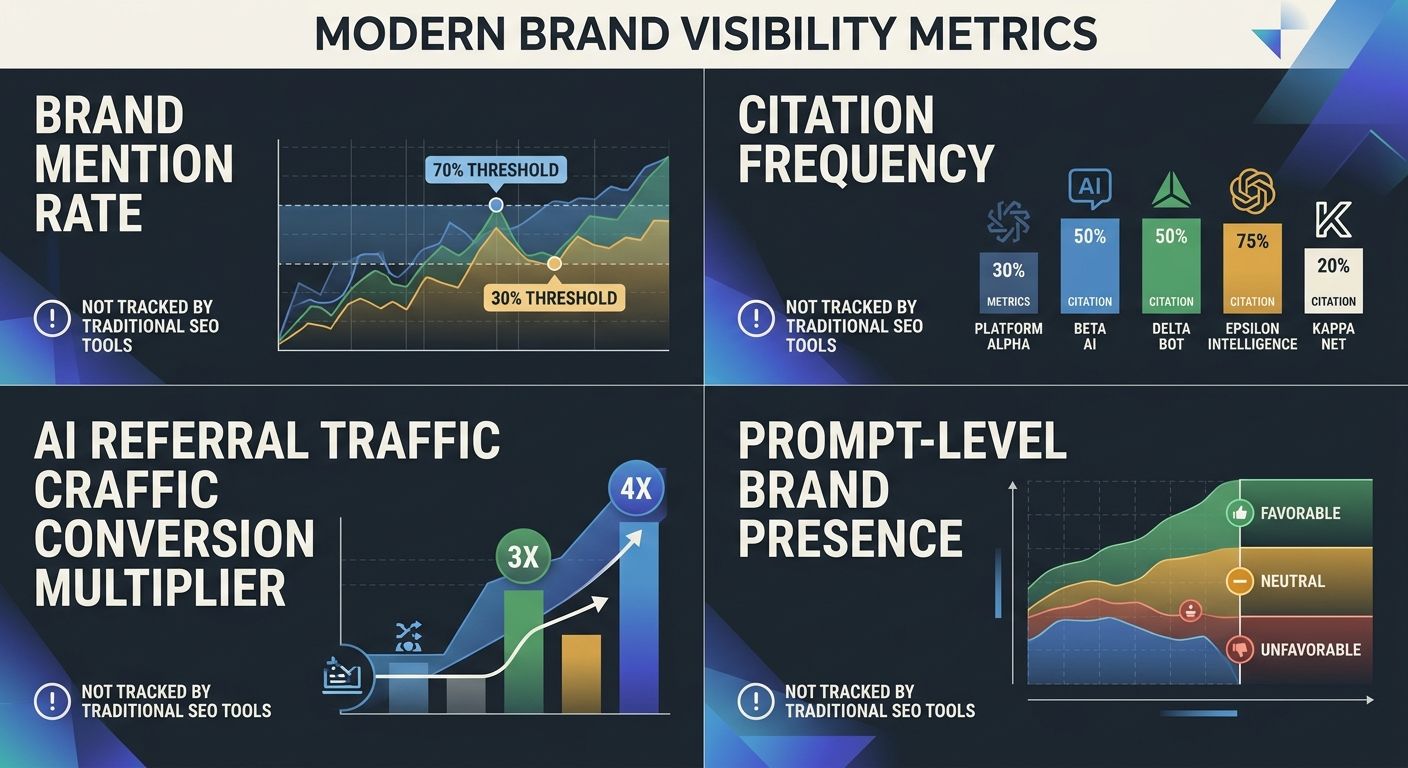

Brand Mention Rate

When an AI engine generates 100 answers about your category, what percentage mention your brand? According to Search Engine Land's analysis of AI visibility signals, scores above 70% indicate strong AI search performance, while scores below 30% signal serious visibility problems. No traditional SEO tool reports this number because calculating it requires prompting AI engines with category-relevant queries and analyzing the generated text for brand references. This is fundamentally different from checking whether a URL appears in a ranked list.
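
The calculation itself is simple once you have the answers in hand. The sketch below assumes you have already collected the generated answers as plain strings; the brand names and sample answers are invented, and matching on a list of aliases is one possible approach, not how any particular vendor does it.

```python
import re


def mentions_brand(answer, brand_aliases):
    """True if any alias appears as a whole word or phrase, case-insensitively."""
    return any(
        re.search(rf"(?<!\w){re.escape(alias)}(?!\w)", answer, flags=re.IGNORECASE)
        for alias in brand_aliases
    )


def brand_mention_rate(answers, brand_aliases):
    """Share of answers (0..1) that mention the brand at least once."""
    if not answers:
        raise ValueError("need at least one answer")
    hits = sum(mentions_brand(answer, brand_aliases) for answer in answers)
    return hits / len(answers)


# Invented sample answers for a hypothetical brand, "Acme CRM".
answers = [
    "For small firms, Acme CRM and Beta Desk are common picks.",
    "Beta Desk is a popular option.",
    "Many teams use acme crm for client intake.",
    "Consider Gamma Suite.",
]

rate = brand_mention_rate(answers, ["Acme CRM", "AcmeCRM"])
print(f"{rate:.0%}")  # 50%
```

Four answers is far too few for a real measurement. The point is only that the input is generated text, not a list of URLs.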

Citation Frequency and Source Attribution

Different answer engines cite sources differently. Google AI Overviews embed links inline. Perplexity provides numbered references. ChatGPT sometimes names sources, sometimes doesn't. Tracking whether your domain appears as a cited source across these platforms requires monitoring each engine separately and parsing unstructured text responses. Platforms like LLMrefs have emerged to track citations across ChatGPT GPT-5, Google AI Overviews, Gemini, Perplexity, Claude, Grok, and Copilot, but integrating this data with your existing SEO workflow remains a manual process.
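
For engines that expose links in their answers, a rough first pass at citation counting can be done with a URL pattern. This is a sketch under the assumption that you have each engine's responses as raw text; the engine labels and responses are placeholders, and answers that name a source without linking it need a different approach.

```python
import re
from collections import Counter
from urllib.parse import urlparse

# Stops at whitespace and at common closing punctuation around links.
URL_PATTERN = re.compile(r"https?://[^\s)\]>\"']+")


def cited_domains(answer_text):
    """Return the set of domains linked in one answer."""
    domains = set()
    for raw_url in URL_PATTERN.findall(answer_text):
        host = urlparse(raw_url.rstrip(".,;:")).hostname
        if host:
            domains.add(host[4:] if host.startswith("www.") else host)
    return domains


def citation_counts(responses_by_engine):
    """For each engine, count how many answers cite each domain."""
    counts = {}
    for engine, answers in responses_by_engine.items():
        counter = Counter()
        for answer in answers:
            counter.update(cited_domains(answer))
        counts[engine] = counter
    return counts


# Placeholder responses; the engine names are labels, not API identifiers.
responses = {
    "engine_a": [
        "See [1] https://www.example.com/guide and https://other.example/post.",
        "No sources here.",
    ],
    "engine_b": [
        "Sources: (https://example.com/pricing), https://example.com/faq",
    ],
}

counts = citation_counts(responses)
print(counts["engine_a"]["example.com"])  # 1
print(counts["engine_b"]["example.com"])  # 1
```

Counting answers instead of links keeps one link-heavy response from inflating a domain's score.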

AI Referral Traffic Quality

Data from early tracking efforts suggests that users arriving via AI citations convert at 3-4x the rate of traditional search traffic. The reasoning is straightforward: the AI has already contextualized your brand as relevant to the user's specific need, so the visitor arrives with higher intent and more context. Google Analytics can capture this referral traffic if you configure the right source filters, but your SEO platform doesn't connect that data to your keyword tracking in any meaningful way. I worked with an ecommerce client whose AI referral conversion rate was 4.1x their organic average, and they had no idea until we set up separate referral source segments.
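
The segmentation step can be prototyped outside your analytics platform before you commit to a configuration. The sketch below assumes you can export sessions as (referrer URL, converted) pairs; the hostname list is an assumption to verify against the referrers you actually see, and the sample data is invented.

```python
from urllib.parse import urlparse

# Assumed referrer hostnames for AI products; verify against your own logs.
AI_REFERRER_HOSTS = {
    "chatgpt.com",
    "perplexity.ai",
    "gemini.google.com",
    "copilot.microsoft.com",
}


def traffic_segment(referrer):
    """Classify a session by its referrer URL."""
    if not referrer:
        return "direct"
    host = (urlparse(referrer).hostname or "").lower()
    if host.startswith("www."):
        host = host[4:]
    return "ai_referral" if host in AI_REFERRER_HOSTS else "other"


def conversion_rates(sessions):
    """Compute conversion rate per segment from (referrer, converted) pairs."""
    totals = {}
    for referrer, converted in sessions:
        segment = traffic_segment(referrer)
        visits, conversions = totals.get(segment, (0, 0))
        totals[segment] = (visits + 1, conversions + int(converted))
    return {seg: conv / visits for seg, (visits, conv) in totals.items()}


# Invented sample export.
sessions = [
    ("https://chatgpt.com/", True),
    ("https://www.perplexity.ai/search?q=x", False),
    ("https://www.google.com/", False),
    ("https://www.google.com/", True),
    ("https://www.google.com/", False),
    ("https://www.google.com/", False),
    ("", False),
]

rates = conversion_rates(sessions)
print(rates["ai_referral"], rates["other"])  # 0.5 0.25
```

Sessions with no referrer land in the direct bucket, so any visitor who read about you in an AI answer and then typed your URL is invisible to this segmentation.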

Prompt-Level Brand Presence

This is the metric that has no analog in traditional SEO. For a given user prompt ("best project management tool for remote teams"), does the AI engine include your brand in its answer? And does it position your brand favorably, neutrally, or as a secondary option? Tracking this requires running actual prompts against live AI engines and analyzing the output. The variability is high, since the same prompt can produce different answers on different days depending on the model version and conversation context.

How New AI Answer Engine Tracking Tools Approach the Problem

A growing category of platforms is attempting to close this gap, and understanding how they work reveals both their value and their current limits.

The basic architecture is different from traditional SEO tools. Instead of scraping HTML for ranked URL positions, these tools submit queries to AI engines through APIs or interfaces, capture the generated text responses, and then analyze those responses for brand mentions, source citations, and sentiment. Tools like Rankscale group performance metrics, competitor insights, and citation data into brand dashboards that look nothing like the keyword position tables you're used to. Frase takes a content-creation-first approach, connecting the writing and optimization process directly to both SEO and generative engine optimization tracking in a single workspace.
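
The submit, capture, analyze loop can be sketched in a few lines. Everything named here is hypothetical: `ask` stands in for whatever client you use to query an engine, the stub engine returns canned text so the example runs offline, and the substring check is a placeholder for real mention and sentiment analysis. It is not a description of how Rankscale, Frase, or any other product works internally.

```python
from dataclasses import dataclass
from itertools import cycle
from typing import Callable, Dict, List


@dataclass
class Observation:
    engine: str
    prompt: str
    run: int
    brand_mentioned: bool


def collect_observations(
    engines: Dict[str, Callable[[str], str]],
    prompts: List[str],
    brand: str,
    runs_per_prompt: int = 3,
) -> List[Observation]:
    """Send every prompt to every engine several times and record mentions."""
    observations = []
    for engine_name, ask in engines.items():
        for prompt in prompts:
            for run in range(runs_per_prompt):
                answer = ask(prompt)  # hypothetical call into an engine client
                mentioned = brand.lower() in answer.lower()
                observations.append(Observation(engine_name, prompt, run, mentioned))
    return observations


def presence_rate(observations: List[Observation]) -> float:
    """Share of observations in which the brand was mentioned."""
    if not observations:
        return 0.0
    return sum(o.brand_mentioned for o in observations) / len(observations)


def make_stub_engine(canned_answers: List[str]) -> Callable[[str], str]:
    """Offline stand-in for a real engine: cycles through canned answers."""
    answers = cycle(canned_answers)
    return lambda prompt: next(answers)


engines = {
    "stub_engine": make_stub_engine(
        ["Acme CRM is a solid choice.", "Most firms pick Beta Desk."]
    )
}
observations = collect_observations(
    engines, ["best CRM for small law firms"], brand="Acme CRM", runs_per_prompt=4
)
print(presence_rate(observations))  # 0.5
```

Running each prompt more than once is the design choice that matters most here, because a single response per prompt hides how much the answers vary.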

If you've been exploring how to build a monitoring stack for this, we covered the practical setup in detail in our piece on building an AI answer engine tracking stack. The short version: you need a combination of AI-specific tracking tools, modified Google Analytics configurations, and a manual auditing process that no single platform has fully automated.

The measurement cadence matters too. AI engine responses change more frequently and less predictably than traditional SERPs. A weekly monitoring cadence for high-priority queries, monthly for brand-level trends, and quarterly for strategic reviews seems to be the emerging standard among teams I've worked with. This is a fundamentally different rhythm from the daily rank tracking most SEO teams are accustomed to, and it connects to the broader challenge of adapting your content strategy as search behavior shifts.

What Changes for Content Strategy

When you can actually measure AI visibility, the feedback loop changes what kind of content you produce. Traditional SEO rewarded keyword-optimized long-form pages. Answer engine visibility rewards content that's structured to be cited: clear definitions, specific statistics, named entities with consistent descriptions, and claims backed by external sources.

This shift connects directly to how you structure your topic architecture. If your content clusters are organized around keywords but lack the entity clarity and citation-ready formatting that AI engines prefer, you'll maintain traditional rankings while becoming invisible in the answer layer that sits on top of them. Princeton researchers found that adding structured citations to content increases AI visibility by 115%, which tells you something important about the gap between "ranks well" and "gets cited."

The trust signals dimension is equally critical. AI engines weight external consensus heavily. They pull from sources that other authoritative sites reference, which means your brand authority and third-party mentions influence AI visibility in ways that have no direct parallel in traditional ranking factors.

Where the Tracking Model Still Falls Short

The new generation of AI answer engine tracking tools solves real problems, but the mechanism has structural weaknesses worth understanding before you rebuild your measurement practice around them.

Reproducibility is low. The same prompt submitted to the same AI engine 24 hours apart can produce different answers with different brand mentions and different source citations. This makes trend data noisy. A brand mention rate of 45% this week and 38% next week might reflect a real change in visibility, or it might reflect normal variation in model outputs. Traditional rank tracking fluctuates daily too, but the variance band is much narrower, and you can average across days with confidence. AI visibility metrics need larger sample sizes across more prompts to produce stable signals, and most tracking tools don't yet make that sampling methodology transparent.
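
A standard two-proportion z-test gives a rough sense of whether a swing like 45% to 38% is distinguishable from noise. The sample sizes below are assumptions, since the number of prompts behind a reported rate is often not disclosed, and the test treats each response as an independent sample, which is only approximately true for repeated prompts.

```python
import math


def two_proportion_z(hits_a, n_a, hits_b, n_b):
    """z statistic for the difference between two observed proportions."""
    p_a, p_b = hits_a / n_a, hits_b / n_b
    pooled = (hits_a + hits_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    return (p_a - p_b) / std_err


# Assumed: 100 sampled answers per week.
z_small = two_proportion_z(45, 100, 38, 100)

# Same rates, assumed 1,000 sampled answers per week.
z_large = two_proportion_z(450, 1000, 380, 1000)

print(round(z_small, 2), round(z_large, 2))
```

With 100 answers per week, the z statistic is about 1.0, well short of the 1.96 threshold for significance at the 5% level, so the drop could easily be noise. With 1,000 answers per week, the same rates give a z of about 3.2, which would be hard to attribute to chance.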

Coverage is incomplete. Dedicated platforms track major models, but AI search is fragmenting rapidly. Voice assistants, in-app AI features, and vertical AI tools (like AI-powered travel planners or healthcare assistants) are generating answers that reference brands without any centralized tracking mechanism. You can monitor ChatGPT and Perplexity. You can't monitor every surface where an LLM might mention or omit your brand.

Attribution remains messy. When someone reads about your brand in a ChatGPT response and then visits your site by typing your URL directly, that shows up as direct traffic in your analytics. The AI referral disappears. Some AI platforms pass referral headers, some don't. As The Verge documented, outlets like Digital Trends and ZDNet experienced traffic declines exceeding 90% from their peaks, partly because of this exact attribution problem layered on top of AI Overviews cannibalizing clicks. As of 2026, the limitations of SEO tools run deeper than anything a single new platform has solved.

The optimization feedback loop is slow. When you optimize a page for traditional SEO, you can track ranking changes within days or weeks. When you optimize content for AI citation, the model might not incorporate those changes until its next training data refresh or retrieval index update. For models without real-time retrieval, that delay could stretch to months. This makes iterative testing painfully slow compared to what SEO teams are accustomed to.

And the most fundamental limitation: these tools can tell you whether you're being cited, but they can't fully explain why. Traditional SEO has decades of research on ranking factors, confirmed signals, and algorithmic patterns. Answer engine optimization is still in its empirical phase. We know that structured data, entity clarity, and external brand mentions correlate with AI visibility. We don't yet have the equivalent of PageRank or E-E-A-T frameworks with documented causal pathways. The practitioners building AI answer engine tracking systems are working with correlations, not confirmed mechanisms, and the gap between those two things matters when you're making budget decisions.

The architectural mismatch between how traditional SEO platforms collect data and how AI engines serve answers to users isn't something a software update will patch. It reflects a structural change in how search works. The tools will mature, the data will stabilize, and the tracking methodologies will become more rigorous. But right now, any marketing team relying exclusively on traditional rank tracking is operating with a blind spot that grows wider every quarter as AI-generated answers consume a larger share of the search experience. Building measurement capability for both layers of visibility is the only way to see the full picture of how your audience actually finds you.

Sarah Chen

SEO strategist and web analytics expert with over 10 years of experience helping businesses improve their organic search visibility. Sarah covers keyword tracking, site audits, and data-driven growth strategies.
