The SEO Data Blind Spot: Why Your Case Studies Show Growth But Your Analytics Tell a Different Story

Estimated SEO traffic and actual Google Analytics sessions diverge by an average of 61.58%, according to a detailed accuracy analysis of SEMrush data. That single number should make every SEO professional reconsider how case studies get assembled.

The SEO Data Blind Spot: Why Your Case Studies Show Growth But Your Analytics Tell a Different Story

Estimated SEO traffic and actual Google Analytics sessions diverge by an average of 61.58%, according to a detailed accuracy analysis of SEMrush data. That single number should make every SEO professional reconsider how case studies get assembled. I've spent the better part of eight years building marketing reports for enterprise brands, and the pattern I keep seeing is consistent: the narrative in the case study deck says "organic growth," while the analytics platform behind it tells a muddier, less flattering story. Understanding how we got here requires walking through the specific events that broke the relationship between SEO reporting and reality.

When All the Numbers Still Agreed

Before July 2023, the SEO reporting ecosystem operated on a set of shared assumptions. Universal Analytics provided session-based tracking that most teams understood. Third-party tools like SEMrush and Ahrefs estimated traffic using rank-tracking data and clickstream panels. Google Search Console delivered impression and click counts from Google's own index. The numbers from these three sources never perfectly aligned, but they told roughly the same directional story. If Search Console showed clicks going up, GA usually confirmed it with sessions trending the same way, and third-party estimates moved in a similar arc.

Case studies from this era had a relatively simple job: pull the GA line chart, overlay it with ranking improvements from your SEO tool of choice, and present a clean growth narrative. The methodology had gaps, but the gaps were small and predictable enough that nobody questioned them aggressively.

The GA4 Migration Cracked the Foundation

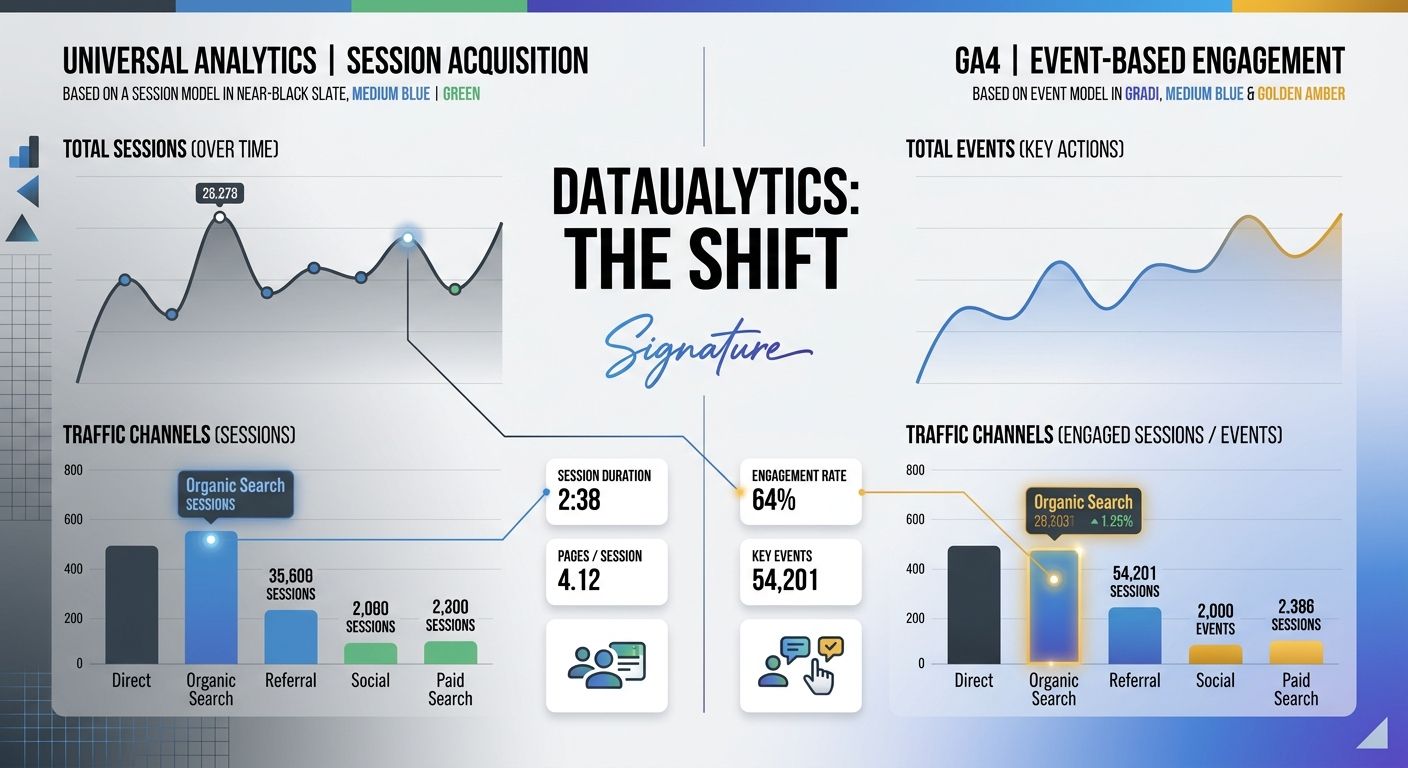

Google's forced migration from Universal Analytics to GA4 changed the measurement model entirely. Sessions became events. Attribution windows shifted. Default channel groupings were redefined. And the practical effect was that organic traffic numbers in GA4 frequently didn't match what Universal Analytics had been reporting for the same site, even during overlapping tracking periods.

GA4 tracking errors became a persistent issue for marketing teams. Misconfigured data streams, missing event parameters, and broken cross-domain tracking silently corrupted data for months before anyone noticed. As documented by Ignite Visibility, even validating GA4 data streams through real-time reports became a necessary troubleshooting step that many teams skipped entirely.

The downstream effect on case studies was significant. Teams that had been tracking a client's organic growth through Universal Analytics suddenly had to rebuild their baselines in GA4. Some chose to stitch the data together manually. Others started their "growth" timelines at the GA4 implementation date, conveniently ignoring any pre-migration context. Both approaches introduced distortion.

If your organization went through this transition without a formal analytics tool audit, the numbers you're looking at today may carry inherited measurement debt you've never accounted for.

The Quiet Problem of Orphaned Data

Beyond the migration itself, data quality in SEO reporting took a hit from structural issues that predated GA4 but got worse after the switch. Orphaned records, broken referral chains, and duplicated contact entries created what Metaplane describes as a domino effect across downstream analytics: revenue reports became inaccurate because they couldn't properly attribute sales, lifetime value calculations excluded valid transactions, and the damage often sat undetected for months.

I audited one B2B SaaS client's GA4 property in late 2025 and found that 23% of their organic landing page data was being attributed to "(direct) / (none)" due to a missing referral exclusion list. Their SEO case study from Q3 2025 claimed a 41% lift in organic sessions. After correcting the attribution, the real number was closer to 17%. Still good. But a very different story than the one that went into the board presentation.

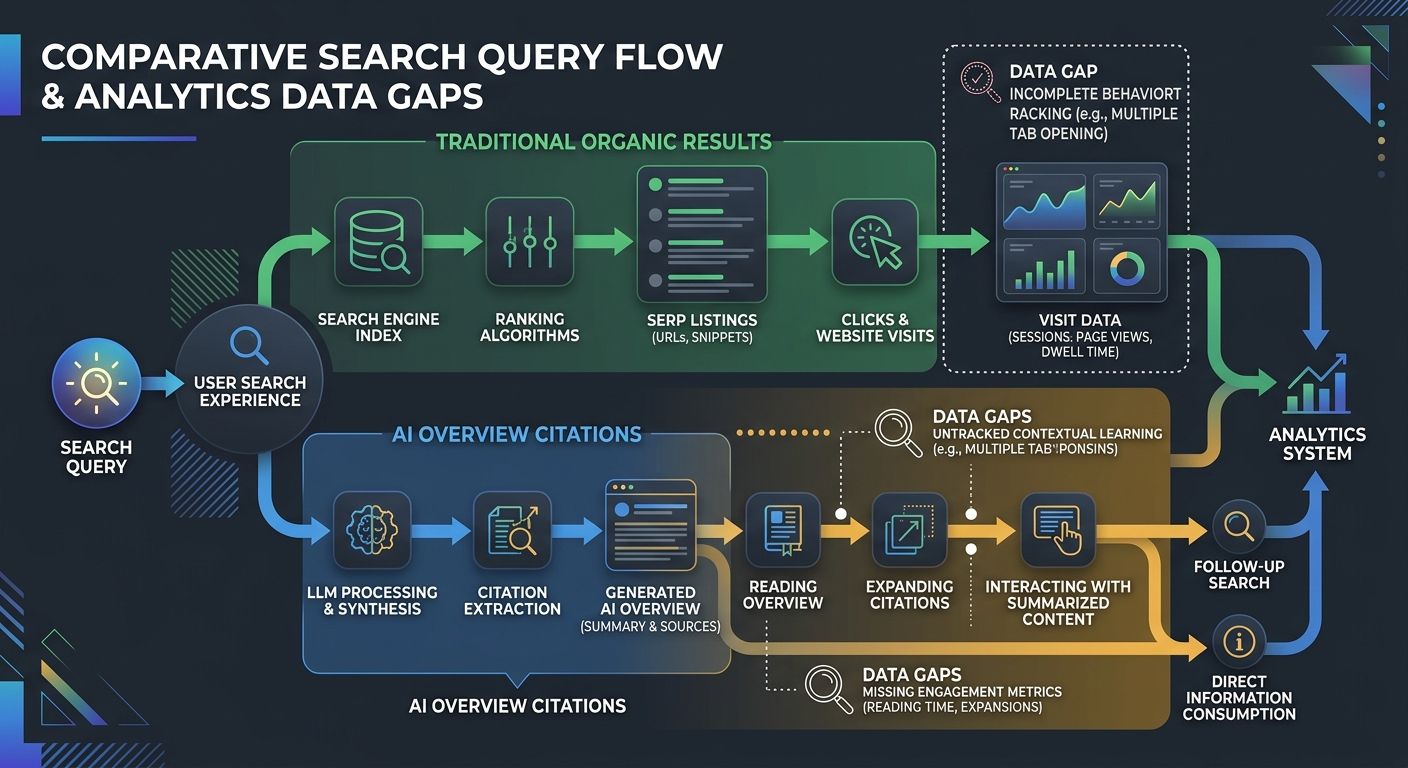

AI Overviews Scrambled the Attribution Model

The second structural break happened as AI-generated search results expanded through 2025 and into 2026. AI Overviews now cite sources from outside the organic top 10 in 83% of cases, according to recent SERP analysis. This means a page can earn visibility, get cited in an AI summary, and drive brand awareness without generating a single trackable click.

Traditional SEO case studies measure success through rankings, clicks, and sessions. When a meaningful portion of your SEO impact happens in zero-click environments, those metrics miss the contribution entirely. The attribution gap, as The Drum reported, is "breaking SEO reporting" because influence has moved upstream into AI-driven discovery environments that create a blind spot around what actually drives visibility and revenue.

This problem compounds the GA4 measurement issues. You now have one system (GA4) that undercounts organic traffic due to configuration errors, and a second structural force (AI Overviews) that generates SEO value your analytics can't see at all. The case study shows a ranking improvement and calls it a win. The analytics show flat or declining sessions and call it a plateau. Both are telling partial truths.

Teams working on zero-click SEO strategies are particularly vulnerable to this disconnect, because the value they generate is real but nearly invisible to standard reporting.

The Case Study Methodology Problem

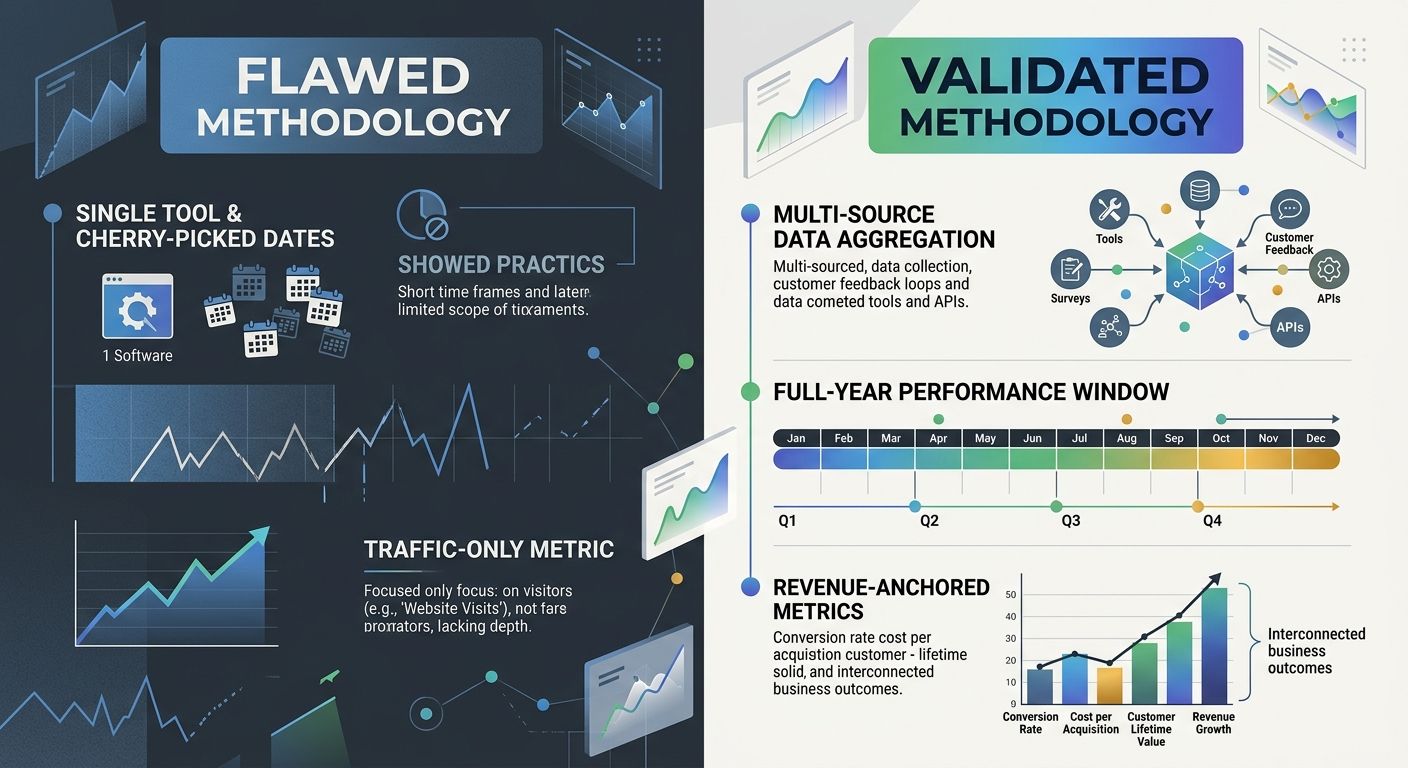

With broken measurement as the backdrop, the way SEO case studies get built makes the blind spot worse. I've reviewed dozens of published case studies from agencies and in-house teams, and the same methodological patterns keep appearing.

Cherry-Picked Time Windows

The most common marketing analytics mistake in case study construction is selecting a time window that flatters the narrative. A 90-day window starting right after a Google core update recovery will show dramatic gains. Extend that window to six months or twelve months, and the picture often normalizes. The March 2026 core update, which we covered in our analysis of content authenticity and rankings, reshuffled a massive portion of search results. Any case study that starts its measurement window in April 2026 is riding a wave of volatility, not demonstrating sustainable strategy.

Tool Estimate Inflation

A cross-platform study of over 7,500 websites found that for smaller sites with fewer than 5,000 monthly visits, SEMrush estimates often overshoot actual traffic by a substantial margin. When a case study reports "estimated organic traffic" from a third-party tool rather than actual GA4 session data, it's building its growth narrative on numbers that may be inflated by 30% to 60% or more.

Single-Metric Focus

Ranking improvements without conversion data. Traffic growth without revenue attribution. Impression gains without click-through analysis. Each of these tells an incomplete story, and yet each appears regularly in published case studies. The teams producing these reports aren't being dishonest. They're working within the constraints of what their tools can measure. But the result is the same: a growth narrative disconnected from business outcomes.

If your reporting infrastructure suffers from these patterns, the data quality crisis in marketing analytics is already affecting your decision-making, whether you've noticed it or not.

Where the Numbers Stand Today

The SEO analytics validation problem isn't going to fix itself with better tools alone. The structural forces driving the disconnect are accelerating: AI-generated search results are expanding, privacy regulations continue to erode cookie-based tracking, and the gap between estimated and actual traffic keeps widening.

What does a credible SEO case study look like in this environment? Based on the patterns I've seen work across enterprise clients, it requires three things.

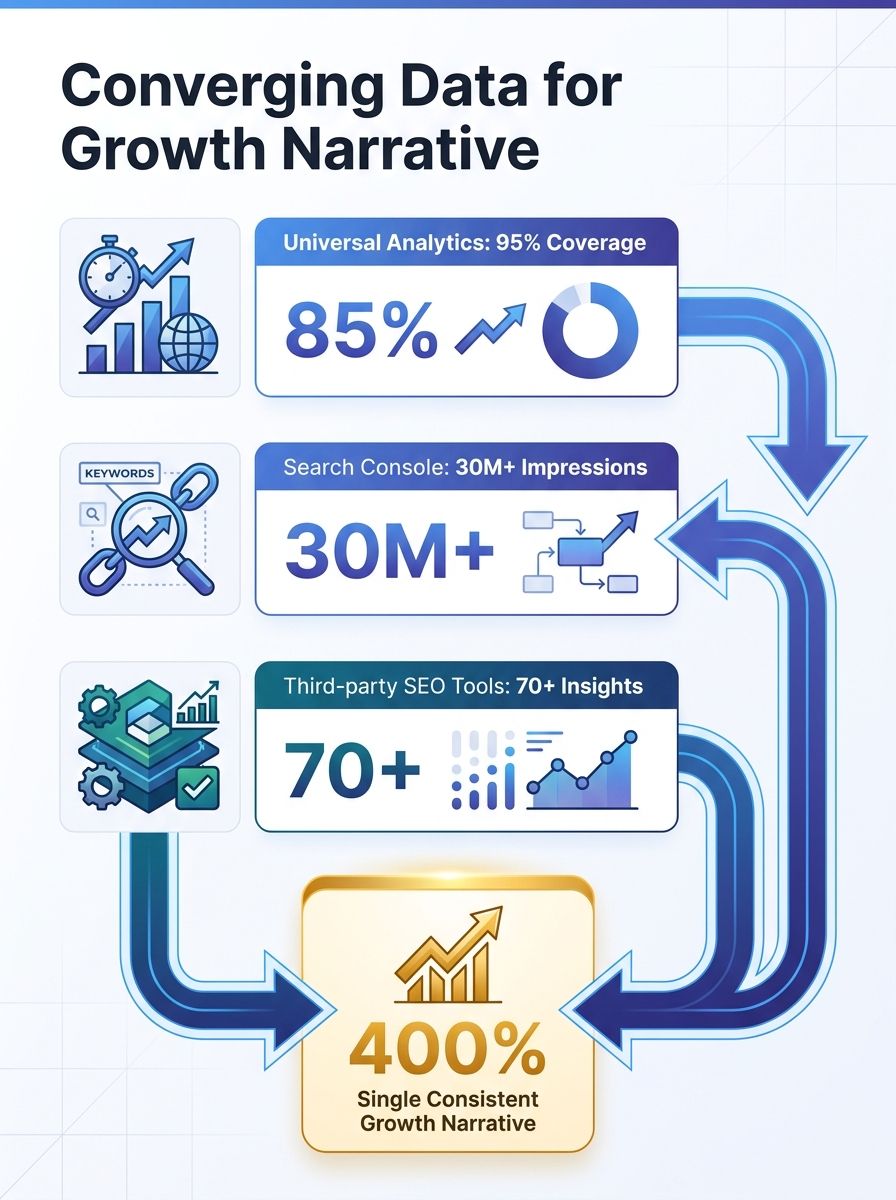

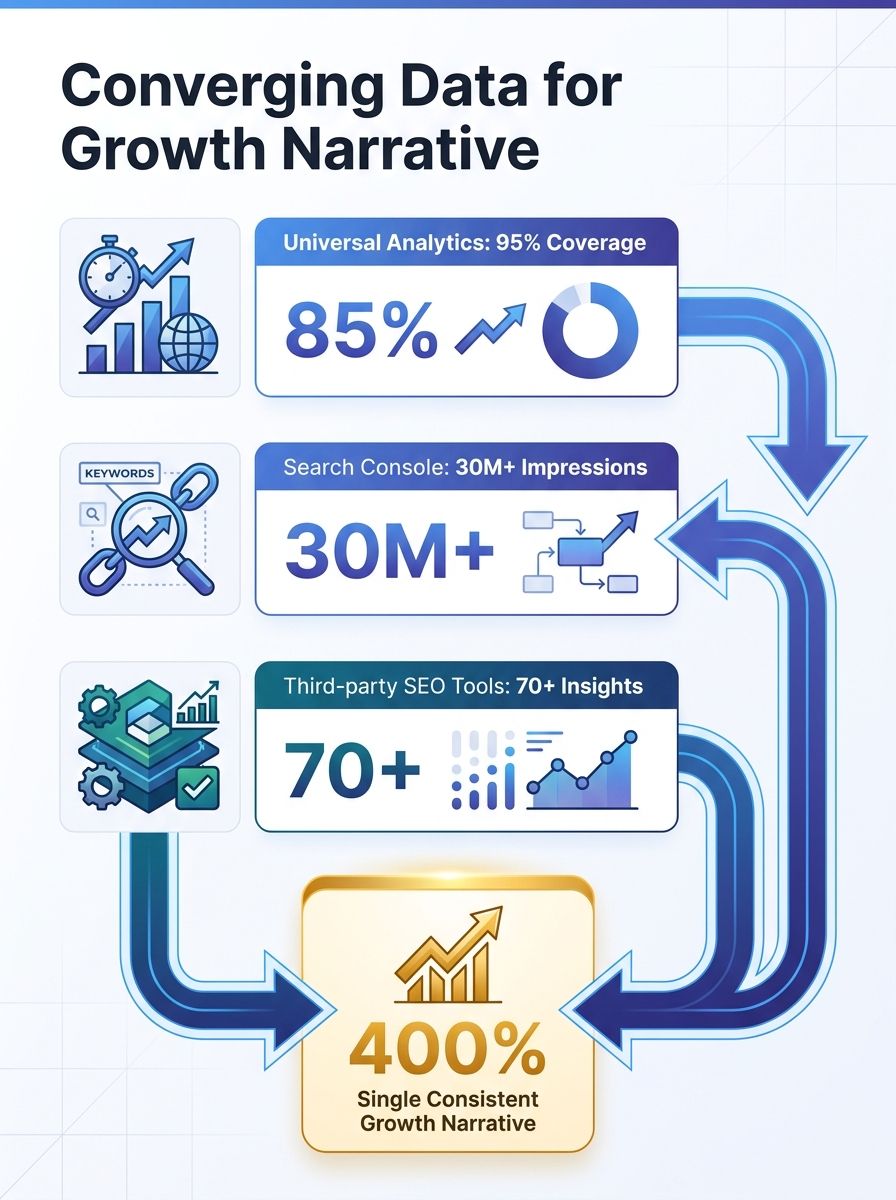

First, multi-source triangulation. No single platform serves as ground truth. GA4 data should be cross-referenced against Search Console clicks, server log data where available, and conversion events tracked independently. When the numbers from three sources agree directionally, you have a finding. When they don't, you have a question that needs investigation before it becomes a slide in a deck.

Second, explicit uncertainty reporting. As Search Engine Journal has noted, uncertainty in analytics comes from the way the tools operate, and acknowledging it doesn't undermine credibility. The strongest case studies I've seen include confidence intervals or at minimum a clear statement about what the data can and cannot confirm. Saying "organic sessions grew between 15% and 22% depending on attribution model" is more useful than saying "organic traffic grew 41%."

Third, business outcome anchoring. Every metric in a case study should connect to a revenue or pipeline figure. If you can't draw that line, the metric belongs in a diagnostic appendix, not in the executive summary. Ranking position is a diagnostic tool. Impressions are a diagnostic tool. Revenue, pipeline value, and conversion rates are outcomes. The distinction matters enormously when you're using a case study to justify continued SEO investment, and even more when you're debugging scenarios where technical SEO checks pass but rankings still decline.

The blind spot between what case studies claim and what analytics confirm has been widening for three years. The teams that close it will be the ones who treat measurement rigor with the same seriousness they bring to keyword research and content strategy. The rest will keep producing impressive-looking decks that fall apart the moment someone asks where the revenue went.

Alex Chen

Alex Chen is a digital marketing strategist with over 8 years of experience helping enterprise brands and agencies scale their online presence through data-driven campaigns. He has led marketing teams at two successful SaaS startups and specializes in conversion optimization and multi-channel attribution modeling. Alex combines technical expertise with strategic thinking to deliver actionable insights for marketing professionals looking to improve their ROI.

Frequently Asked Questions

- Why do SEO case studies show different numbers than Google Analytics?

- Estimated SEO traffic from tools like SEMrush and actual Google Analytics sessions diverge by an average of 61.58%. The gap widened after the GA4 migration changed how sessions are tracked, and AI Overviews now generate SEO value in zero-click environments that analytics can't measure, creating blind spots between what case studies claim and what data confirms.

- How did the GA4 migration affect SEO reporting accuracy?

- Google's migration from Universal Analytics to GA4 changed the entire measurement model—sessions became events, attribution windows shifted, and default channel groupings were redefined. This caused organic traffic numbers in GA4 to frequently not match Universal Analytics data, and many teams introduced distortion by either manually stitching data together or starting growth timelines at the GA4 implementation date.

- What is the most common mistake in SEO case study construction?

- Cherry-picking favorable time windows is the most common mistake, such as starting measurement right after a Google core update recovery to show dramatic gains. Extending the measurement window to six or twelve months typically reveals a more normalized picture, making the original narrative misleading about strategy effectiveness.

- How much do SEMrush traffic estimates overstate actual traffic?

- For smaller sites with fewer than 5,000 monthly visits, SEMrush estimates often overshoot actual traffic by 30% to 60% or more according to a cross-platform study of over 7,500 websites. This inflation distorts case study narratives when teams report estimated traffic rather than actual GA4 session data.

- What percentage of GA4 data gets misattributed due to configuration errors?

- In a B2B SaaS client audit, 23% of organic landing page data was being attributed to "(direct) / (none)" due to a missing referral exclusion list, causing a claimed 41% session lift to actually be closer to 17%. The default GA4 configuration misattributes traffic more often than most teams realize.

- How do AI Overviews impact SEO measurement and attribution?

- AI Overviews cite sources from outside the organic top 10 in 83% of cases, allowing pages to earn visibility and drive brand awareness through zero-click environments without generating trackable clicks. This creates a blind spot in traditional SEO metrics like rankings, clicks, and sessions that miss the actual value generated.

- What should a credible SEO case study include to be accurate?

- A credible case study requires multi-source triangulation (cross-referencing GA4, Search Console, and server logs), explicit uncertainty reporting (confidence intervals or attribution model ranges), and business outcome anchoring (connecting metrics to revenue or pipeline figures rather than just rankings or impressions).

Explore more topics