Why Your Technical SEO Passes but Rankings Still Drop: Debugging the Hidden Disconnect

A client sent me their Screaming Frog crawl report with a single-line email: "Everything is green. We lost 41% of organic traffic anyway." I pulled up their data and confirmed it. Zero crawl errors. Core Web Vitals in the green across all three metrics. Clean canonical tags. Valid structured data. XML sitemap properly submitted. And yet, 23 of their top-50 keywords had fallen off page one over six weeks.

This is the most frustrating pattern in SEO, and I see it at least once a month. The technical SEO audit looks clean, the team assumes something mysterious happened, and everyone starts guessing. But there's nothing mysterious about it. The problem is that most audits test whether your site is crawlable and indexable when what actually determines rankings is far broader than that. Technical health is necessary but wildly insufficient.

Let me walk you through the exact diagnostic framework I use when a site passes every technical check and still bleeds rankings.

The Audit Gap Nobody Talks About

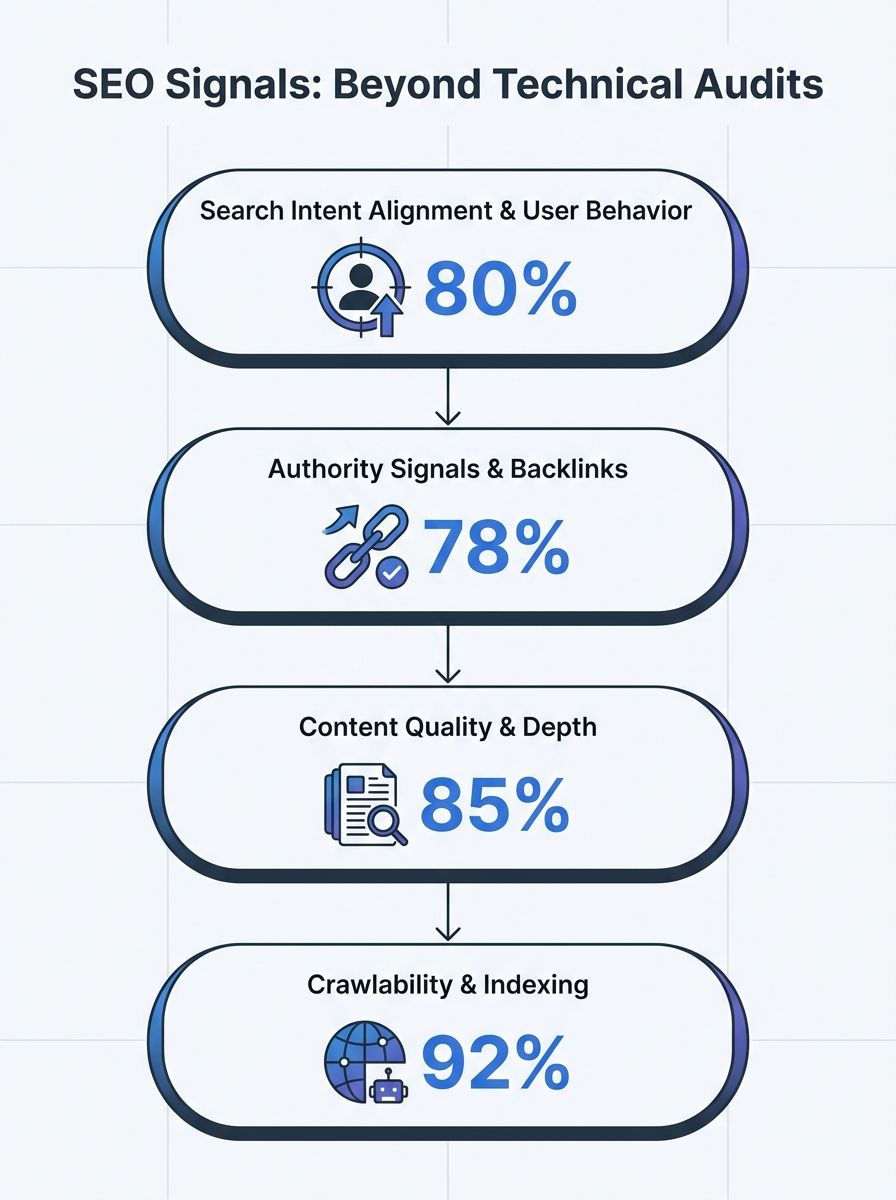

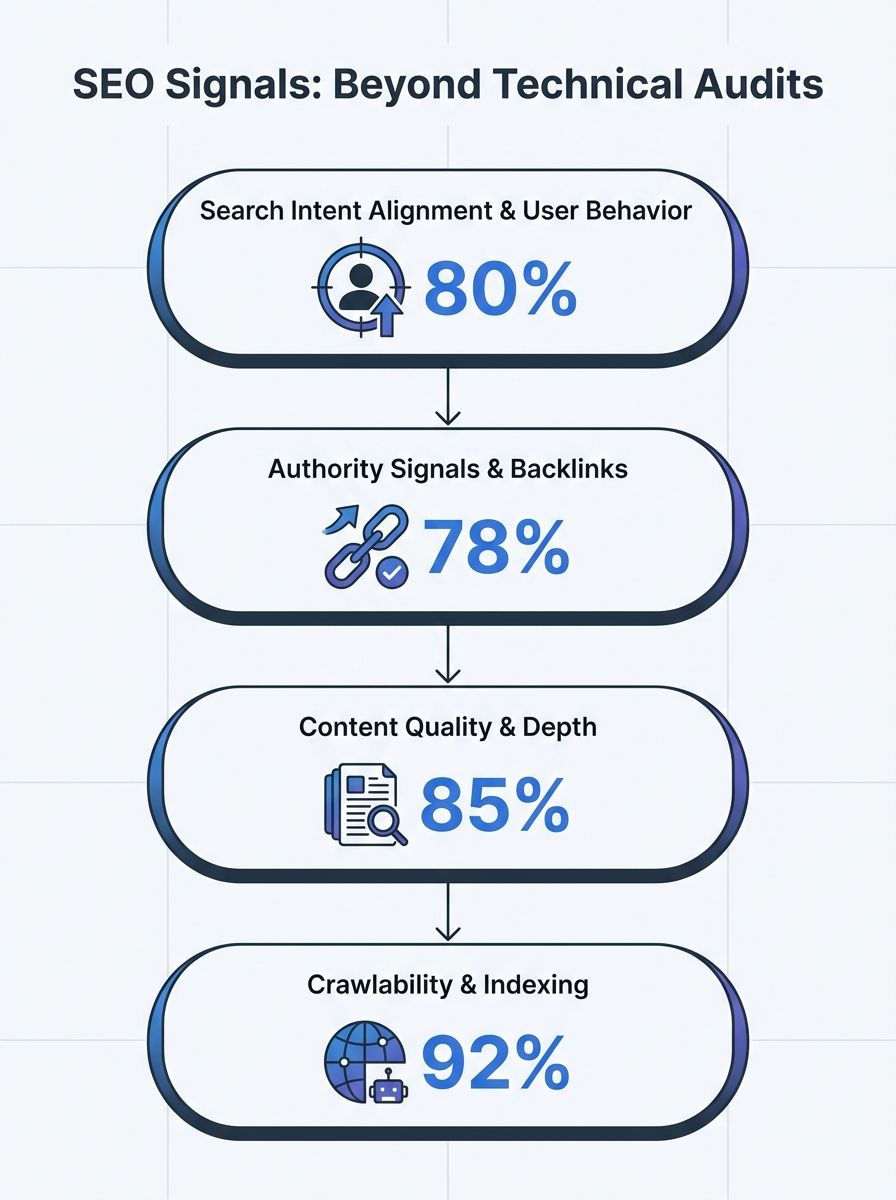

Here's the uncomfortable truth: by my rough estimate, a standard technical SEO audit tests maybe 30% of the signals Google actually weighs. Crawlability, indexability, page speed, mobile-friendliness, HTTPS, structured data: these are the table stakes. You need them, but they don't earn you anything.

Think of it like a restaurant health inspection. Passing means your kitchen isn't going to make someone sick. It doesn't mean the food is good, the menu is compelling, or anyone wants to eat there. Most technical SEO audit gaps live in exactly this blind spot.

According to analysis from NAV43's research on technical vs. content SEO, websites with high-quality, optimized content typically experience 3-4x higher conversion rates compared to sites focusing solely on technical elements. But that content advantage only materializes when the technical foundation is solid. It's a both/and situation, not either/or. The disconnect happens when teams treat the technical audit as the finish line instead of the starting line.

The Five Hidden Layers Behind a Ranking Drop

When I run a ranking drop diagnosis for a client, I'm not re-running the technical audit. I'm investigating five layers that traditional crawlers never touch.

Layer 1: Search Intent Has Shifted Under You

This is the most common culprit, and the hardest to detect with automated tools. Your page was written to match informational intent. But Google has quietly started serving transactional results for the same query. Your content hasn't changed. The SERP has.

I had a client in the B2B SaaS space whose "what is" articles were steadily dropping. When I pulled the SERPs, every first-page result had shifted to comparison pages and pricing tables. Google had reclassified the intent, and their informational content no longer matched. If you've experienced this, you'll find the diagnosis framework in this deep dive on search intent mismatch extremely relevant.

Actionable takeaway: Pull the top 10 results for every keyword that dropped. Classify the intent type (informational, transactional, navigational, commercial investigation). If the dominant intent no longer matches your page, no amount of technical optimization will save it. You need to rebuild the content.
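The takeaway above can be sketched in a few lines of Python. This is a minimal illustration, not a finished tool: it assumes you have already labeled each top-10 result by hand, and the names `dominant_intent`, `intent_mismatch`, and the 60% threshold are hypothetical choices rather than anything Google publishes.

```python
from collections import Counter

# Intent labels taken from the article; "commercial" stands for
# commercial investigation. Labels are assigned by hand per result.
INTENTS = {"informational", "transactional", "navigational", "commercial"}


def dominant_intent(serp_labels):
    """Return (intent, share) for the most common intent on the SERP."""
    if not serp_labels:
        raise ValueError("serp_labels must not be empty")
    unknown = set(serp_labels) - INTENTS
    if unknown:
        raise ValueError(f"unknown intent labels: {sorted(unknown)}")
    intent, count = Counter(serp_labels).most_common(1)[0]
    return intent, count / len(serp_labels)


def intent_mismatch(page_intent, serp_labels, threshold=0.6):
    """True when a different intent holds at least `threshold` of the SERP.

    The 0.6 threshold is an arbitrary assumption; tune it to taste.
    """
    intent, share = dominant_intent(serp_labels)
    return intent != page_intent and share >= threshold


# Hypothetical SERP: seven comparison pages, three explainers.
serp = ["commercial"] * 7 + ["informational"] * 3
print(dominant_intent(serp))                   # ('commercial', 0.7)
print(intent_mismatch("informational", serp))  # True
```

Running this over every dropped keyword gives a quick shortlist of pages where the SERP has moved away from the page type you published.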

Layer 2: E-E-A-T Signals Are Weak or Missing

Google's quality raters use Experience, Expertise, Authoritativeness, and Trustworthiness as core evaluation criteria in the Search Quality Evaluator Guidelines. These aren't direct algorithmic ranking factors in the way PageRank is. They're signals that Google's systems approximate through proxy indicators.

And here's where most sites fail without realizing it. Weak E-E-A-T signals typically look like this:

No author bylines on content pages

Author pages that exist but contain no credentials, no links to external profiles, no evidence of expertise

No structured data identifying who wrote the content (JSON-LD Person/Author markup)

Content written by "the team" or "admin" with zero human identity

No topical authority built through supporting content clusters

The richer your internal linking network and author profiles, the stronger your expertise signal becomes. Google wants to know who is speaking. When I audit for gaps between content authority and technical optimization, the author infrastructure is the first thing I check.

Actionable takeaway: Build real author pages with verified credentials. Add JSON-LD Person schema. Link to external profiles (LinkedIn, industry publications). Show Google a real human with demonstrable expertise wrote this content.
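As a rough illustration of the Person schema mentioned above, the sketch below builds a schema.org `Person` object and wraps it in a JSON-LD script tag. The property names (`name`, `jobTitle`, `url`, `sameAs`) are standard schema.org vocabulary; the helper names, the author "Jane Doe", and every URL are placeholders invented for the example.

```python
import json


def author_jsonld(name, job_title, profile_url, same_as):
    """Build a schema.org Person object as a JSON-LD dictionary."""
    return {
        "@context": "https://schema.org",
        "@type": "Person",
        "name": name,
        "jobTitle": job_title,
        "url": profile_url,        # the on-site author page
        "sameAs": list(same_as),   # external profiles for the same person
    }


def as_script_tag(data):
    """Wrap a JSON-LD dictionary in the script tag used to embed it."""
    body = json.dumps(data, indent=2)
    return '<script type="application/ld+json">\n' + body + "\n</script>"


# Placeholder author and URLs, for illustration only.
person = author_jsonld(
    name="Jane Doe",
    job_title="Senior SEO Analyst",
    profile_url="https://example.com/authors/jane-doe",
    same_as=["https://www.linkedin.com/in/jane-doe-example"],
)
print(as_script_tag(person))
```

The markup only helps if it matches what is visible on the page, so the byline and author page should show the same name, title, and profile links.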

Layer 3: Core Update Recalibration, Not Penalty

Core updates don't penalize. They recalibrate. This distinction matters enormously for diagnosis.

When a core update drops your rankings, Google hasn't decided your site is bad. It's decided other sites are relatively better across the quality signals it now weighs more heavily. Your absolute quality hasn't changed. Your relative position has.

As research from eSign Web Services explains, ranking drops typically aren't caused by a single dramatic factor. They come from how behavior, intent, infrastructure, trust, and updates stack up together. This is why isolating the cause feels so difficult. The March 2026 core update, for example, hit sites that had thin supporting content and weak topical authority particularly hard. You can read more about how the March core update reshaped competitive rankings if you were affected.

Core update vulnerability almost always traces back to one of three things: content depth compared to competitors, user engagement metrics (particularly return-to-SERP rates), or trust signals. Never just technical health.

Actionable takeaway: After any core update, compare your dropped pages side-by-side with the pages that replaced you. Look at word count, content depth, author credentials, freshness, and supporting internal links. The gap is your recovery roadmap.
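The side-by-side comparison can be kept honest with a small script. This is a sketch under stated assumptions: the metric names and the `content_gaps` helper are hypothetical, the numbers are invented, and you would collect the values by hand or from your own crawl. Word count in particular is only a crude proxy for depth.

```python
# Hypothetical metrics. True means a higher value is better,
# False means a lower value is better.
HIGHER_IS_BETTER = {
    "word_count": True,
    "internal_links": True,
    "has_author_credentials": True,
    "days_since_update": False,
}


def content_gaps(mine, rival):
    """Return {metric: (mine, rival)} for every metric where the rival leads."""
    gaps = {}
    for metric, higher_is_better in HIGHER_IS_BETTER.items():
        ours, theirs = mine[metric], rival[metric]
        behind = theirs > ours if higher_is_better else theirs < ours
        if behind:
            gaps[metric] = (ours, theirs)
    return gaps


# Invented numbers for a dropped page and the page that replaced it.
dropped_page = {"word_count": 900, "internal_links": 4,
                "has_author_credentials": False, "days_since_update": 400}
replacement = {"word_count": 2100, "internal_links": 3,
               "has_author_credentials": True, "days_since_update": 30}

for metric, (ours, theirs) in content_gaps(dropped_page, replacement).items():
    print(f"{metric}: ours={ours}, theirs={theirs}")
```

Here the script flags word count, author credentials, and freshness, and leaves internal links alone because the dropped page already leads on that metric.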

Layer 4: Technical Drift Between Audits

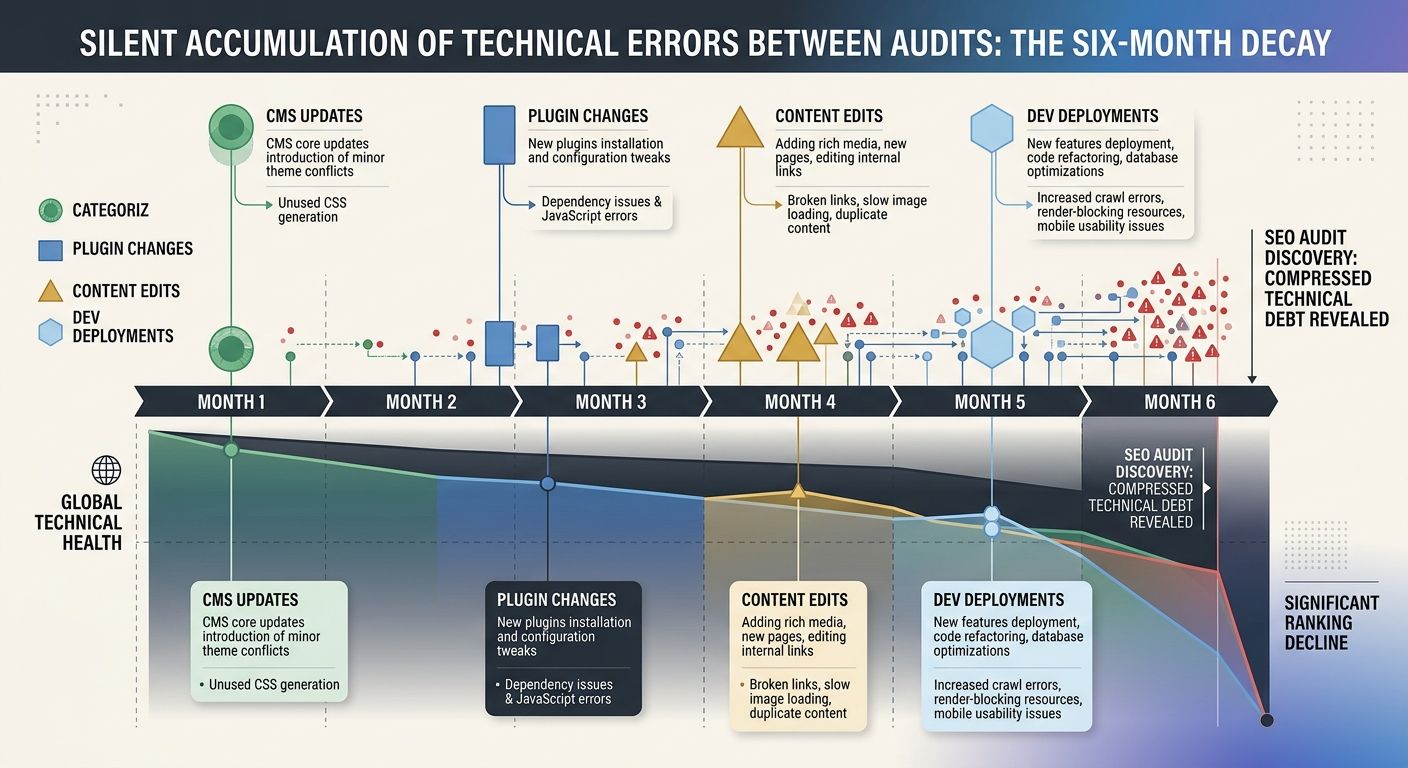

Your site passed the audit in Q1. What happened since then?

CMS updates, new plugins, theme changes, dev deployments, marketing team edits. I've seen a single WordPress plugin update break canonical tags across 4,000 pages without anyone noticing for weeks. Technical drift is silent and cumulative.

A solid technical SEO audit framework recommends running full deep audits at least twice a year, with lighter ongoing checks in between. Between those deep audits, you should be running weekly checks on Search Console errors and keyword rankings, monthly reviews of Core Web Vitals and crawl data, and quarterly content freshness assessments.

This is exactly why I push clients toward building a governance framework for technical SEO rather than treating audits as one-time events. Audits decay. Governance persists.

Actionable takeaway: Set up automated monitoring for canonical tags, indexation status, and rendering errors. Don't rely on periodic audits alone. The issues that kill rankings sneak in between audits.
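A minimal sketch of canonical monitoring, using only the Python standard library. It assumes you already store a baseline of `{url: canonical}` from your last audit and fetch page HTML some other way; `CanonicalParser`, `extract_canonical`, and `canonical_drift` are hypothetical names, and the example URLs are placeholders.

```python
from html.parser import HTMLParser


class CanonicalParser(HTMLParser):
    """Collect the href of every <link rel="canonical"> in a document."""

    def __init__(self):
        super().__init__()
        self.canonicals = []

    def handle_starttag(self, tag, attrs):
        if tag != "link":
            return
        attr = dict(attrs)
        rels = (attr.get("rel") or "").lower().split()
        if "canonical" in rels and attr.get("href"):
            self.canonicals.append(attr["href"])


def extract_canonical(html):
    """Return the canonical URL, or None if it is missing or ambiguous."""
    parser = CanonicalParser()
    parser.feed(html)
    # Zero tags or several tags are both treated as "no usable canonical".
    return parser.canonicals[0] if len(parser.canonicals) == 1 else None


def canonical_drift(baseline, current):
    """Compare {url: canonical} snapshots; return {url: (old, new)} changes."""
    return {
        url: (old, current.get(url))
        for url, old in baseline.items()
        if current.get(url) != old
    }


# Placeholder data: the page used to self-canonicalize, now points home.
baseline = {"https://example.com/a": "https://example.com/a"}
html = '<html><head><link rel="canonical" href="https://example.com/"></head></html>'
current = {"https://example.com/a": extract_canonical(html)}
drift = canonical_drift(baseline, current)
print(drift)
```

Run on a schedule against a sample of key URLs, a check like this would surface a plugin-induced canonical change within a day instead of weeks. Note that it reads raw HTML only, so canonicals injected by JavaScript need a rendered crawl.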

Layer 5: Index Budget Bloat

Most SEO professionals understand crawl budget. Fewer think about index budget, and it's the more dangerous constraint.

Crawl budget is about bandwidth: how many URLs Googlebot can crawl in a given window. Index budget is about quality: how many of your URLs Google considers worth indexing. Nearly 33% of pages across the web lack canonical tags, contributing to duplicate content and index dilution. When Google spends its index budget on your faceted navigation pages, parameter URLs, and thin tag archives, your important pages lose relative visibility.

I worked with an e-commerce client that had 180,000 indexed URLs. Only 12,000 were product or category pages that actually mattered. The rest were filter combinations, pagination sequences, and empty tag pages. Pruning the index down to quality pages led to a 28% increase in organic traffic within two months. No content changes. No new links. Just removing noise.

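Actionable takeaway: Check your indexed page count in Search Console against the number of pages you actually want indexed. If the ratio is off, start pruning with noindex tags and canonical consolidation. Be careful with robots.txt: it blocks crawling, not indexing, so a disallowed URL can stay in the index and Google can't see a noindex tag on a page it isn't allowed to crawl. Use it to keep crawlers out of low-value URL spaces only after those URLs have dropped out of the index.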
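The ratio check is simple arithmetic. The sketch below applies it to the e-commerce figures quoted above (180,000 indexed URLs, 12,000 that mattered). The `index_bloat` helper is a hypothetical name, and it assumes every page you want indexed is in fact indexed.

```python
def index_bloat(indexed, intended):
    """Return (share of indexed URLs that are intended, surplus URL count).

    Assumes all intended pages are already indexed; a share above 1.0
    would mean some intended pages are missing from the index instead.
    """
    if indexed <= 0:
        raise ValueError("indexed must be positive")
    return intended / indexed, max(indexed - intended, 0)


share, surplus = index_bloat(indexed=180_000, intended=12_000)
print(f"{share:.1%} of indexed URLs are intended; {surplus:,} are surplus")
# 6.7% of indexed URLs are intended; 168,000 are surplus
```

In that example fewer than one indexed URL in ten was a page the business actually wanted ranking, which is the kind of ratio that justifies a pruning project.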

The Diagnostic Framework: Where to Start

When a client comes to me with a clean technical audit and dropping rankings, I run this sequence in order. Skipping steps leads to misdiagnosis.

1. Verify the drop is real. Check if the keyword tracking tool changed its scraping location, if SERP features displaced your organic listing, or if the drop is seasonal. Sometimes the data lies, and I've written about why your analytics dashboard might not reflect reality.

2. Map the drop to a timeline. Did it align with a core update? A deployment? A competitor's new content? Check Google's confirmed update log against your ranking data.

3. Audit search intent alignment. Pull current SERPs for every dropped keyword. Compare the dominant page type against your page type.

4. Evaluate E-E-A-T signals. Author pages, structured author data, content depth, topical coverage, external citations.

5. Check for technical drift. Re-crawl and compare against the previous audit. Look specifically at canonical tags, rendering output, internal link changes, and JavaScript loading behavior.

6. Assess index budget allocation. Compare indexed pages to intended pages. Identify bloat.

7. Benchmark against competitors who gained. This is where the real insights live. Don't just look at your own site. Look at who took your positions and what they do differently.
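The timeline-mapping step in the sequence above lends itself to a small script. This is a sketch under stated assumptions: the `events_near` helper and the 14-day window are hypothetical, and every date and label below is invented for illustration rather than taken from Google's update log.

```python
from datetime import date, timedelta


def events_near(drop_date, events, window_days=14):
    """Return labels of events dated within `window_days` before the drop.

    `events` is an iterable of (label, date) pairs; results are ordered
    from the event closest to the drop to the one furthest away.
    """
    window = timedelta(days=window_days)
    hits = [
        (drop_date - when, label)
        for label, when in events
        if timedelta(0) <= drop_date - when <= window
    ]
    return [label for _, label in sorted(hits)]


# Invented dates, for illustration only.
events = [
    ("core update rollout (hypothetical)", date(2025, 3, 10)),
    ("plugin deployment (hypothetical)", date(2025, 3, 18)),
    ("site redesign (hypothetical)", date(2025, 1, 5)),
]
drop = date(2025, 3, 20)
print(events_near(drop, events))
```

With this data the deployment two days before the drop is listed first and the update ten days before it second, while the redesign falls outside the window. Proximity is only a lead to investigate, not proof of cause.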

Why Content Authority Beats Technical Perfection

I've been saying this for years, and the data keeps proving it: technical SEO is the foundation, but content authority is where rankings are actually won or lost.

A site that loads in 1.2 seconds with perfect Core Web Vitals but publishes generic, authorless content will lose to a slower site that demonstrates deep expertise and covers topics thoroughly. Every single time. The technical foundation ensures Google can find and render your content. Authority ensures Google wants to rank it.

This is why I always recommend teams resist the temptation to keep publishing volume-first content when rankings dip. The instinct is to publish more. The correct response is usually to publish less but better, with stronger author signals, deeper topical coverage, and clearer alignment with what searchers actually need.

Your Next Move

If your technical audit is clean but rankings are declining, stop re-running the same audit. The answer isn't in your crawl report. Pull your dropped keywords into a spreadsheet. For each one, answer three questions: Has the search intent changed? Who replaced me and what do they have that I don't? Is my content backed by a credible, identifiable author with demonstrable expertise?

Those three questions will surface the real problem faster than any crawler ever could. And once you know the actual cause, recovery becomes a strategic project instead of a guessing game.

Sarah Chen

SEO strategist and web analytics expert with over 10 years of experience helping businesses improve their organic search visibility. Sarah covers keyword tracking, site audits, and data-driven growth strategies.
