AI Overviews Are Reshaping Search Traffic Patterns: What Your 2026 Analytics Should Track Instead

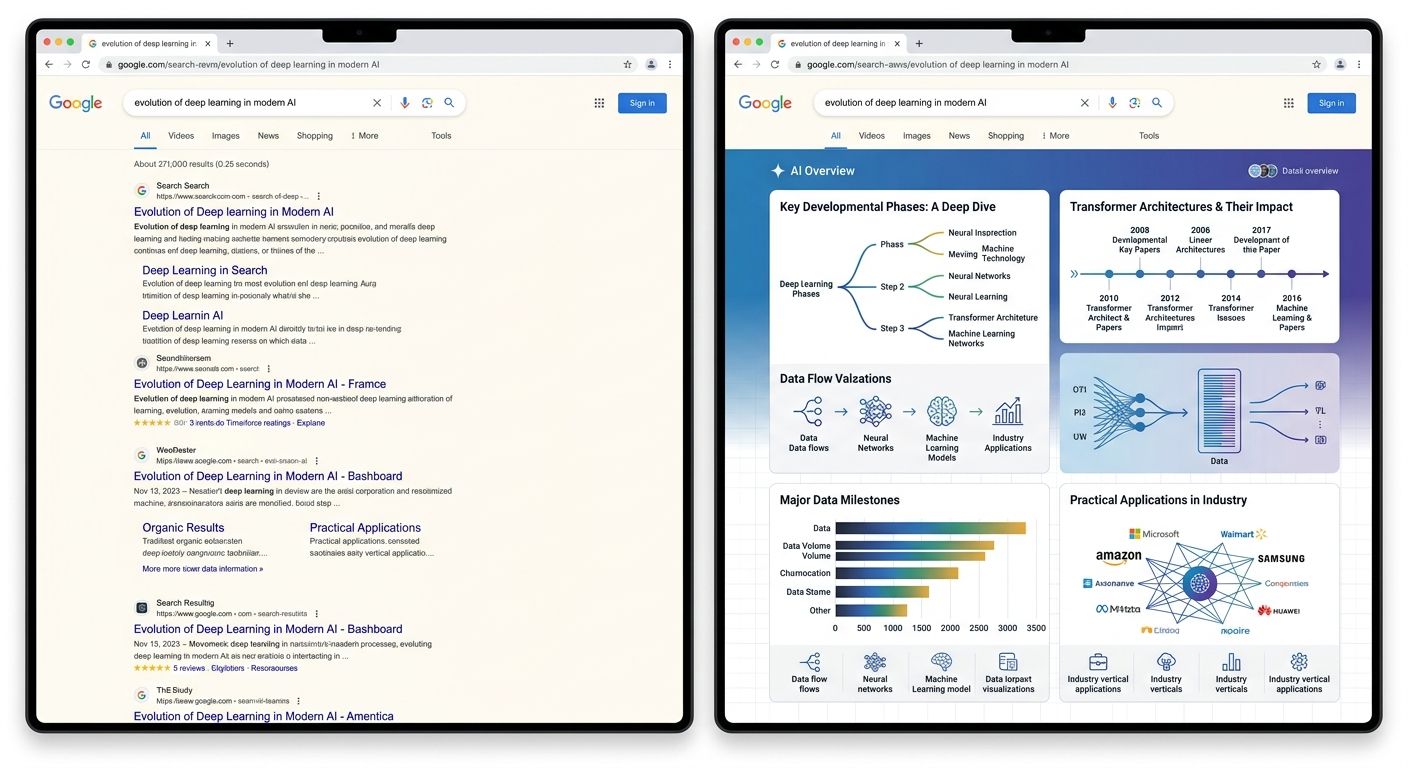

LinkedIn's organic B2B traffic dropped 60% from non-branded queries after AI Overviews absorbed the clicks those rankings used to deliver. The company's response, detailed during its February 2026 strategy overhaul, was to stop treating traffic as the primary KPI and start tracking citation frequency inside AI-generated answers instead. That pivot raised a question every marketing team now faces: which analytics framework actually captures what's happening in search?

BrightEdge data from February 2026 shows AI Overviews appearing on 48% of all tracked queries, up 58% year-over-year. The average AI Overview occupies over 1,200 pixels on desktop, pushing every organic result below the fold. Seer Interactive reported that organic CTR dropped from 1.76% to 0.61% on queries where AI Overviews appear, a 61% decline. And according to an Ahrefs study of 3,000 sites, 63% of websites now receive at least one visit from an AI chatbot, meaning a parallel traffic stream exists that most dashboards aren't segmenting at all.

This leaves marketing teams choosing between three distinct analytics approaches. Each one tracks different signals, requires different tooling, and suits different organizational maturity levels. I've been testing all three across client accounts since late 2025, and the tradeoffs are sharper than most vendor pitches suggest.

GA4 and Search Console Still Look Normal, and That's the Problem

The first approach is to keep doing what you've been doing: rely on GA4 for traffic data and Google Search Console for impressions, clicks, and average position. For many teams, especially those with limited budgets or small SEO operations, this feels like the safe default.

On the surface, it works. Rankings may hold steady. Impressions in Search Console might even increase, because Google still crawls and indexes your pages for AI Overview synthesis. The dashboard looks green. But the story those numbers tell has a growing gap between visibility and actual business impact.

When AI Overviews answer a query directly, your page can maintain its position-one ranking while losing the majority of its clicks. SparkToro data shows 58% of Google searches now end in zero clicks. Your impressions count goes up (because Google displayed your URL in the SERP), but the user never visits your site. GA4 doesn't distinguish between an impression that led to a click and one that got absorbed by an AI summary sitting 1,200 pixels above it.
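One way to surface this gap is to compare two periods of a Search Console export query by query. The following is a minimal sketch, not a definitive method: the `QueryStats` fields, the `absorbed_queries` helper, the 30% threshold, and the sample rows are all assumptions made up for illustration.

```python
from dataclasses import dataclass


@dataclass
class QueryStats:
    query: str
    impressions: int
    clicks: int

    @property
    def ctr(self) -> float:
        # Click-through rate; 0.0 when the query had no impressions.
        return self.clicks / self.impressions if self.impressions else 0.0


def absorbed_queries(before, after, min_ctr_drop=0.3):
    """Return queries whose impressions held or rose while CTR fell sharply.

    before/after: lists of QueryStats for two comparable periods.
    min_ctr_drop: relative CTR decline treated as "sharp" (assumed 30%).
    """
    prior = {row.query: row for row in before}
    flagged = []
    for row in after:
        old = prior.get(row.query)
        if old is None or old.ctr == 0:
            continue  # nothing to compare against
        ctr_drop = (old.ctr - row.ctr) / old.ctr
        if row.impressions >= old.impressions and ctr_drop >= min_ctr_drop:
            flagged.append((row.query, round(ctr_drop, 2)))
    return flagged


# Illustrative numbers only, not real Search Console data.
before = [QueryStats("crm pricing", 1000, 50), QueryStats("acme login", 800, 400)]
after = [QueryStats("crm pricing", 1200, 24), QueryStats("acme login", 820, 405)]
flagged = absorbed_queries(before, after)
print(flagged)
```

In this made-up sample only the non-branded query is flagged, which mirrors the pattern described above: more impressions, far fewer clicks.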

If you've noticed a disconnect between what your SEO reports show and what your pipeline actually delivers, you're experiencing the exact phenomenon I covered when examining why analytics dashboards don't match actual performance. The metrics aren't wrong. They're measuring a reality that no longer correlates with revenue.

The tradeoff is clear. This approach costs nothing extra and requires no new tools. It works fine if your business model depends on branded queries (where AI Overviews are less disruptive) or if you're in an industry where AI Overviews trigger infrequently. Entertainment sits at just 37% AI Overview adoption. But for healthcare (88%), education (83%), or B2B tech (82%), relying solely on GA4 and Search Console means flying with instruments calibrated for weather conditions that no longer exist.

When This Approach Still Makes Sense

Small publishers with fewer than 50 pages of content and limited marketing spend can still get adequate signal from traditional tools. The key adjustment: create a custom GA4 segment filtering for organic traffic that converts (leads, purchases, signups), and track that trendline weekly instead of raw session counts. If conversion volume from organic drops while sessions stay flat, you've identified AI Overview click absorption without spending a dollar on new software.
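As a rough sketch of that weekly check, assuming you export organic sessions and organic conversions per week from GA4 by hand: the function name, the tuple layout, and both thresholds below are hypothetical and should be tuned to your own traffic.

```python
def organic_absorption_weeks(weeks, session_tolerance=0.05, conversion_drop=0.15):
    """Flag weeks where organic sessions stayed flat but conversions fell.

    weeks: list of (label, organic_sessions, organic_conversions), oldest first.
    session_tolerance: max relative change in sessions still treated as "flat".
    conversion_drop: min relative decline in conversions worth flagging.
    Both thresholds are assumptions, not recommended values.
    """
    flagged = []
    # Compare each week with the one immediately before it.
    for (_, prev_s, prev_c), (label, cur_s, cur_c) in zip(weeks, weeks[1:]):
        if prev_s == 0 or prev_c == 0:
            continue  # avoid dividing by zero on empty weeks
        session_change = (cur_s - prev_s) / prev_s
        conv_change = (cur_c - prev_c) / prev_c
        if abs(session_change) <= session_tolerance and conv_change <= -conversion_drop:
            flagged.append(label)
    return flagged


# Illustrative data only.
weekly = [
    ("2026-W01", 4000, 120),
    ("2026-W02", 4050, 118),
    ("2026-W03", 3980, 95),
    ("2026-W04", 4010, 93),
]
flagged_weeks = organic_absorption_weeks(weekly)
print(flagged_weeks)
```

This version compares week over week, so it catches a sudden drop but not a slow slide. Comparing against a trailing four-week average would be a reasonable variation.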

What Dedicated AI Monitoring Platforms Actually Track

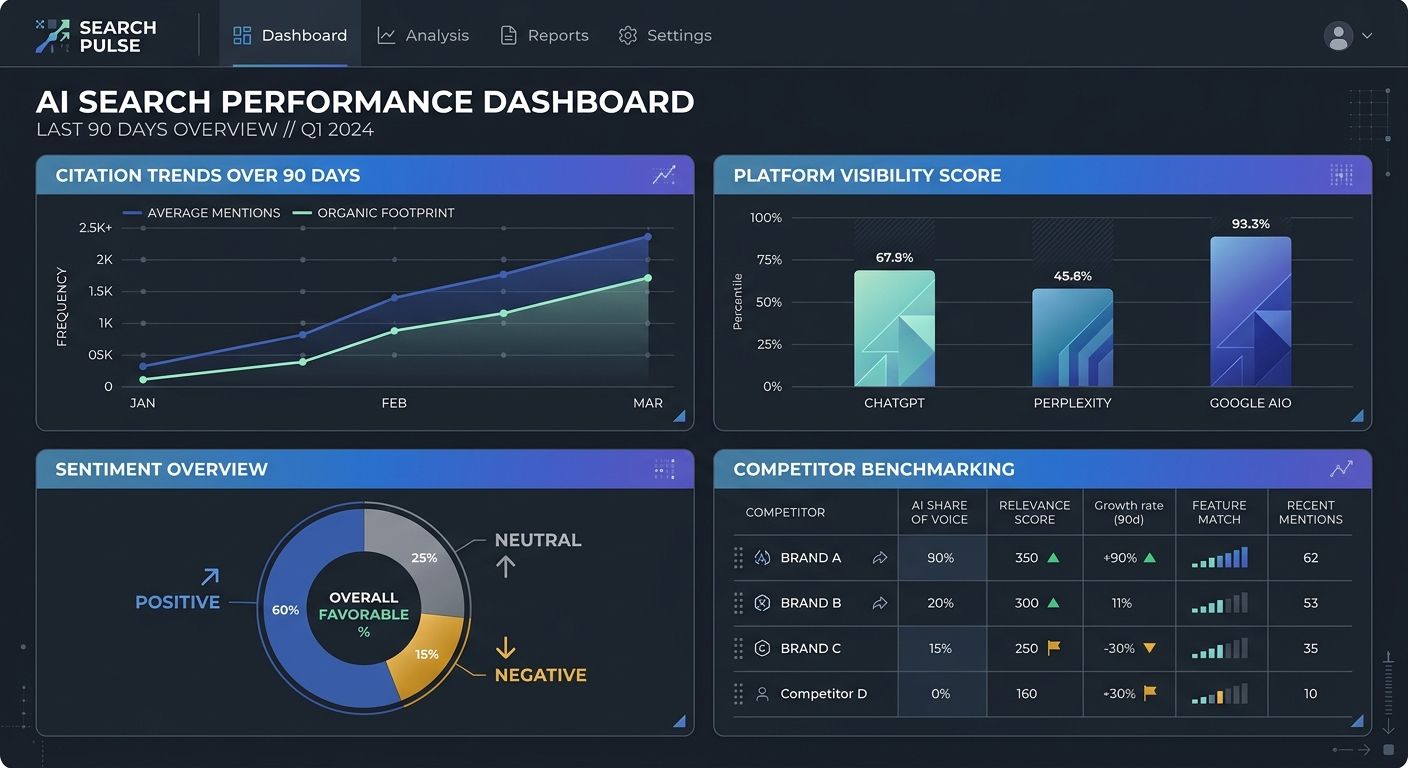

The second approach adds purpose-built tools that track brand visibility inside AI-generated responses. Platforms like Otterly, Semrush's AI Search Visibility Checker, and Omnia now monitor citations, mentions, and sentiment across ChatGPT, Perplexity, Claude, Gemini, Copilot, and Google's AI Overviews simultaneously.

These tools answer a fundamentally different set of questions. Instead of "how do we rank for keyword X?", they tell you: does ChatGPT mention our brand when a user asks about our product category? Are we being cited with a link, or just referenced by name? What's the sentiment of the context surrounding our mention? Which competitor appears more often in AI-synthesized answers?

This matters because the SEO impact of AI search engines extends well beyond Google. DuckDuckGo processes roughly 100 million searches per day, and Brave Search handles over 50 million daily from its own independent index. Perplexity, ChatGPT with browsing, and Copilot have carved out meaningful search volume in their own right. If you're only tracking Google, you're missing a growing share of the discovery layer. I've worked with client brands that were completely absent from AI search results despite holding strong traditional rankings, and the visibility gap only became apparent after deploying dedicated monitoring.

The platforms typically track:

- AI Overview trigger rate for your target keywords
- Citation frequency and which specific URLs get cited
- Citation positioning within the AI-generated answer (first source vs. third source cited)
- Linked vs. unlinked mentions, a crucial distinction since unlinked brand references still drive awareness but won't appear in any referral traffic report
- Sentiment analysis of the context around your brand mention
- Cross-platform comparison of your visibility in ChatGPT vs. Perplexity vs. Google AI Overviews
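To make those signals concrete, here is one way a single exported mention could be modeled and summarized. This is a sketch under assumptions: the `AIMention` record, its field names, and the platform labels are hypothetical and do not reflect any vendor's actual export schema.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class AIMention:
    platform: str                    # e.g. "chatgpt", "perplexity", "google_aio"
    query: str                       # prompt or keyword that was monitored
    cited_url: Optional[str] = None  # None means an unlinked brand mention
    position: Optional[int] = None   # 1 = first source cited in the answer
    sentiment: str = "neutral"       # label as reported by the monitoring tool

    @property
    def linked(self) -> bool:
        return self.cited_url is not None


def linked_share_by_platform(mentions):
    """Return {platform: share of mentions that carry a link}."""
    total = Counter(m.platform for m in mentions)
    linked = Counter(m.platform for m in mentions if m.linked)
    return {platform: linked[platform] / total[platform] for platform in total}


# Illustrative records only.
mentions = [
    AIMention("chatgpt", "best crm for startups", "https://example.com/crm", 1, "positive"),
    AIMention("chatgpt", "crm pricing comparison"),
    AIMention("perplexity", "best crm for startups", "https://example.com/crm", 3),
    AIMention("google_aio", "crm pricing comparison"),
]
shares = linked_share_by_platform(mentions)
print(shares)
```

Keeping linked and unlinked mentions in the same record makes the cross-platform comparison a simple group-by rather than a separate report.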

That BrightEdge finding is the single most important data point for understanding why alternative search engine optimization requires different content architecture than traditional SEO. Google's FastSearch and query fan-out technique breaks a user's query into multiple sub-queries, then retrieves top results for each sub-query independently. If your content covers one narrow keyword, you might rank for it traditionally but never get cited in an AI Overview that synthesizes answers from five different sub-queries. Teams investing in content strategies built for AI-driven brand discovery are constructing the topical coverage these retrieval systems prefer.

The Tradeoffs

Dedicated AI monitoring runs between $200 and $2,000+ per month depending on the platform and the number of tracked queries. The data is novel and genuinely useful, but it exists in isolation. You can see that ChatGPT cites you 340 times per month for queries related to your product category. Connecting that visibility to downstream revenue, though, requires manual correlation with your CRM and pipeline data. These tools show you the visibility picture; they don't close the loop to business outcomes.

There's also a maturity issue. The AI monitoring space is young. Tracking methodologies differ between platforms, making apples-to-apples comparisons difficult. I've seen discrepancies of 30% or more in citation counts between two tools monitoring the same queries during the same week.

Hybrid Attribution Models That Merge Both Datasets

The third approach, and the one I'm recommending to most mid-market and enterprise clients right now, combines traditional analytics with AI monitoring into a unified framework for search traffic attribution. This requires more setup, but it produces the clearest picture of how search actually drives your business.

The foundation is a modified GA4 configuration. You create distinct channel groupings that separate AI-referred traffic from traditional organic. Gartner predicts traditional search engine volume will drop 25% by 2026, and Adobe reported this week that AI-driven traffic to U.S. retail sites surged 269%, with those visits converting at higher rates than traditional organic sessions. If you're lumping all of that into one "Organic Search" bucket, your reporting is hiding the most important signal in your data.

The implementation looks like this:

Tag AI referral sources in GA4. Contentsquare and similar platforms offer integrations that automatically identify traffic from ChatGPT, Perplexity, Claude, and other AI chatbots. You can also build regex-based channel definitions in GA4 using referral strings that match known AI platform domains.

Layer in AI monitoring data. Export citation and mention data from your AI monitoring tool (weekly or via API) and join it with your GA4 conversion data. The goal is to map citation frequency to changes in branded search volume and direct traffic, which often spike when a brand gets mentioned repeatedly in AI answers even without a click.

Track engagement quality by source. Segment session depth, time on site, and conversion rate by AI-referred vs. traditional organic vs. AI Overview click traffic. Across six client accounts I manage, AI-referred visitors consistently show 15–25% higher conversion rates than traditional organic visitors arriving through the same landing pages.

Monitor topical authority coverage. Use your AI monitoring tool's query fan-out data alongside Search Console queries to identify topical gaps. If ChatGPT cites competitors for sub-queries your content doesn't address, that's a content roadmap signal. Understanding how query fan-out works for AI search optimization makes this step significantly more precise.
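The regex-based tagging in the first step can be prototyped outside GA4 before you commit to a channel definition. The sketch below is an assumption-heavy illustration: the domain lists are hypothetical starting points, not a complete or authoritative inventory, and the channel names are invented for this example. Check the patterns against the referrers that actually appear in your own reports.

```python
import re
from urllib.parse import urlparse

# Hypothetical domain lists; verify against your own referral data.
AI_REFERRER = re.compile(
    r"(^|\.)(chatgpt\.com|chat\.openai\.com|perplexity\.ai|claude\.ai|"
    r"gemini\.google\.com|copilot\.microsoft\.com)$"
)
SEARCH_REFERRER = re.compile(
    r"(^|\.)(google\.[a-z.]+|bing\.com|duckduckgo\.com|search\.brave\.com)$"
)


def classify_referrer(referrer_url: str) -> str:
    """Bucket a referrer URL into a coarse channel name."""
    host = (urlparse(referrer_url).hostname or "").lower()
    if not host:
        return "direct"
    # Check AI domains first: gemini.google.com would otherwise match
    # the generic google.* search pattern.
    if AI_REFERRER.search(host):
        return "ai_referral"
    if SEARCH_REFERRER.search(host):
        return "organic_search"
    return "referral"


samples = [
    "https://chatgpt.com/",
    "https://www.perplexity.ai/search?q=crm",
    "https://gemini.google.com/app",
    "https://www.google.com/",
    "https://news.ycombinator.com/item?id=1",
    "",
]
channels = [classify_referrer(url) for url in samples]
print(channels)
```

Anchoring each pattern to the end of the hostname keeps lookalike domains out of the AI bucket, and ordering the checks matters because some AI products live on subdomains of search engines.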

The result is an analytics framework that answers three questions simultaneously: Are we visible in AI-generated answers? Does that visibility drive measurable business outcomes? And where should we invest content resources to increase both?
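The join between citation exports and GA4 data described in the second step can start as something very small. This sketch assumes both exports have already been reduced to weekly totals keyed by a zero-padded ISO week label; the function names, dictionary keys, and numbers are hypothetical. The correlation is only a first-pass signal over a handful of weeks, not evidence of causation.

```python
def join_by_week(citations, ga4_rows):
    """Inner-join two weekly exports on their week label.

    citations: {week: citation_count} from the AI monitoring tool.
    ga4_rows: {week: {"branded_clicks": int, "conversions": int}}.
    Weeks missing from either side are dropped.
    """
    joined = []
    for week in sorted(citations.keys() & ga4_rows.keys()):
        joined.append({"week": week, "citations": citations[week], **ga4_rows[week]})
    return joined


def pearson(xs, ys):
    """Plain Pearson correlation; returns None when it is undefined."""
    n = len(xs)
    if n < 2:
        return None
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        return None
    return cov / (var_x * var_y) ** 0.5


# Illustrative data only; W05 has no GA4 row yet, so the join drops it.
citations = {"2026-W01": 60, "2026-W02": 75, "2026-W03": 90, "2026-W04": 110, "2026-W05": 130}
ga4_rows = {
    "2026-W01": {"branded_clicks": 400, "conversions": 31},
    "2026-W02": {"branded_clicks": 430, "conversions": 33},
    "2026-W03": {"branded_clicks": 470, "conversions": 38},
    "2026-W04": {"branded_clicks": 520, "conversions": 41},
}
joined = join_by_week(citations, ga4_rows)
r = pearson([row["citations"] for row in joined], [row["branded_clicks"] for row in joined])
print(len(joined), r)
```

An inner join is the conservative choice here: a week with citation data but no GA4 export should not silently count as zero clicks.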

The Tradeoffs

This approach demands cross-functional coordination between your analytics team, your SEO team, and whoever manages your marketing tech stack. If those teams work in silos, choosing the right analytics tools for your specific data needs becomes critical infrastructure rather than a nice-to-have. You'll also need someone comfortable building custom GA4 explorations and maintaining the data joins between platforms. This isn't a set-it-and-forget-it configuration.

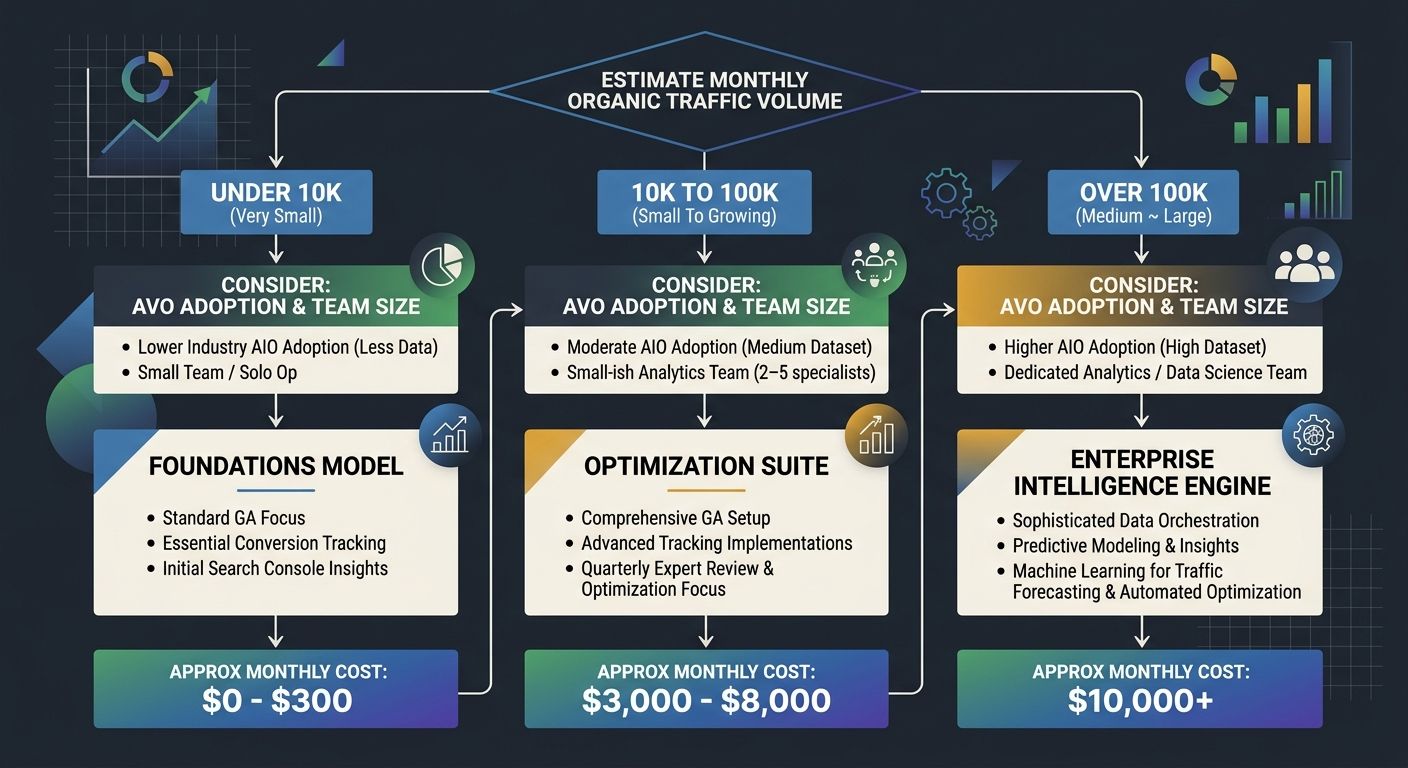

Cost is higher. Between the AI monitoring subscription, any additional tagging infrastructure, and the analyst hours to maintain the model, expect to spend 3–5x what you'd spend on approach two alone. For teams managing significant organic traffic, that cost is trivial relative to the revenue at risk from misattributed search performance.

Who Should Pick Which

The answer depends on three variables: your traffic volume, your organizational analytics maturity, and which industries your content competes in.

If you're a small business or early-stage startup with organic traffic under 10,000 monthly sessions, stick with approach one (traditional GA4 and Search Console) but implement the conversion-focused segmentation I described. Your traffic numbers are small enough that AI-referred visits won't show up as statistically significant patterns yet, and your budget is better spent on content production than monitoring tools.

If you're a mid-market brand or agency managing multiple clients across high-AI-Overview-adoption industries like healthcare, education, B2B tech, or insurance, approach two gives you the fastest time-to-insight. The AI Overview metrics a dedicated platform tracks will surface visibility gaps that traditional tools completely miss. Budget $300–500 per month per brand as a starting point.

If you're an enterprise with an existing analytics team and organic search drives meaningful pipeline revenue, invest in approach three. The hybrid model is the only framework that closes the loop between AI visibility and business outcomes. It's also the only one that will hold up when your CFO asks why organic-attributed revenue dropped while your SEO team insists rankings haven't moved. If you've run into the disconnect between case study growth claims and what your analytics actually show, a hybrid attribution model is how you resolve it.

The window for treating this as optional is closing fast. AI Overviews appeared on 31% of queries in February 2025. By February 2026, that number hit 48%. If the trajectory holds, we're looking at 60%+ coverage by early 2027, according to Search Engine Journal's analysis of publisher impact. Every month you delay adapting your measurement framework is another month of decisions made on data that describes a search landscape which no longer exists. I'd rather build the measurement infrastructure now, while the data discrepancies are still explainable to leadership, than try to reverse-engineer what happened after a full quarter of pipeline decline that nobody's dashboard predicted.

Alex Chen

Alex Chen is a digital marketing strategist with over 8 years of experience helping enterprise brands and agencies scale their online presence through data-driven campaigns. He has led marketing teams at two successful SaaS startups and specializes in conversion optimization and multi-channel attribution modeling. Alex combines technical expertise with strategic thinking to deliver actionable insights for marketing professionals looking to improve their ROI.
