The SEO Execution Cadence: Building a Repeatable 4-Week Sprint System That Scales

Eight hours of planning per month. That's the documented overhead for a well-run 4-week SEO sprint, according to monday.com's sprint planning research. Compare that to the 20–40 hours most marketing teams spend in monthly strategy meetings that produce vague quarterly roadmaps and scattered Asana boards. The gap between those two numbers explains why sprint-based SEO teams consistently outperform their waterfall counterparts: they spend less time planning and more time shipping measurable work.

I've run SEO operations both ways across two SaaS companies and a handful of agency engagements. The waterfall approach felt productive because it generated impressive strategy decks. The sprint approach felt chaotic at first because it demanded decisions every week. But the sprint teams produced results at roughly double the velocity, measured by pages shipped, keywords moved, and traffic captured per quarter.

This article documents the specific 4-week SEO execution framework I've refined over three years, including the cadence, the roles, the checkpoints, and the metrics that tell you whether the system is actually working.

Why the Waterfall Model Breaks Down for Organic Growth

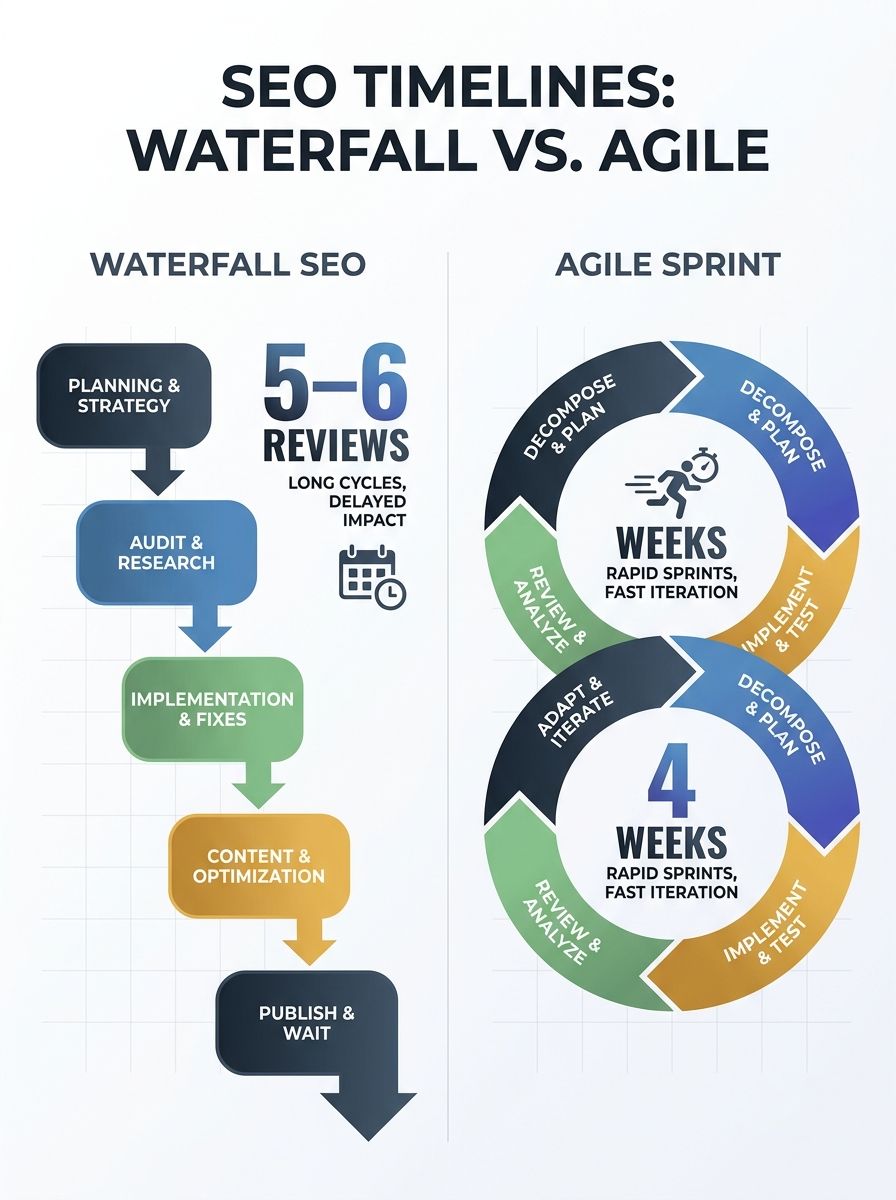

Traditional SEO follows a familiar sequence: research keywords for two weeks, build a content calendar for a month, brief writers over another two weeks, produce content across six to eight weeks, then wait another eight to twelve weeks for ranking signals. By the time you have performance data, the original research is four to five months old. Keyword difficulty has shifted. Competitors have published. Search intent may have evolved entirely.

Agile SEO methodology breaks this into 2–4 week cycles where each cycle includes planning, implementation, tracking results, and refining based on performance. The feedback loop tightens from months to weeks.

The real advantage isn't speed alone. It's that sprint teams can respond to ranking shifts and new keyword opportunities immediately rather than waiting for the next quarterly planning cycle. When Google's algorithm changes or a competitor publishes a definitive guide on your target topic, a sprint team adjusts in the next cycle. A waterfall team adjusts in the next quarter, if they notice at all.

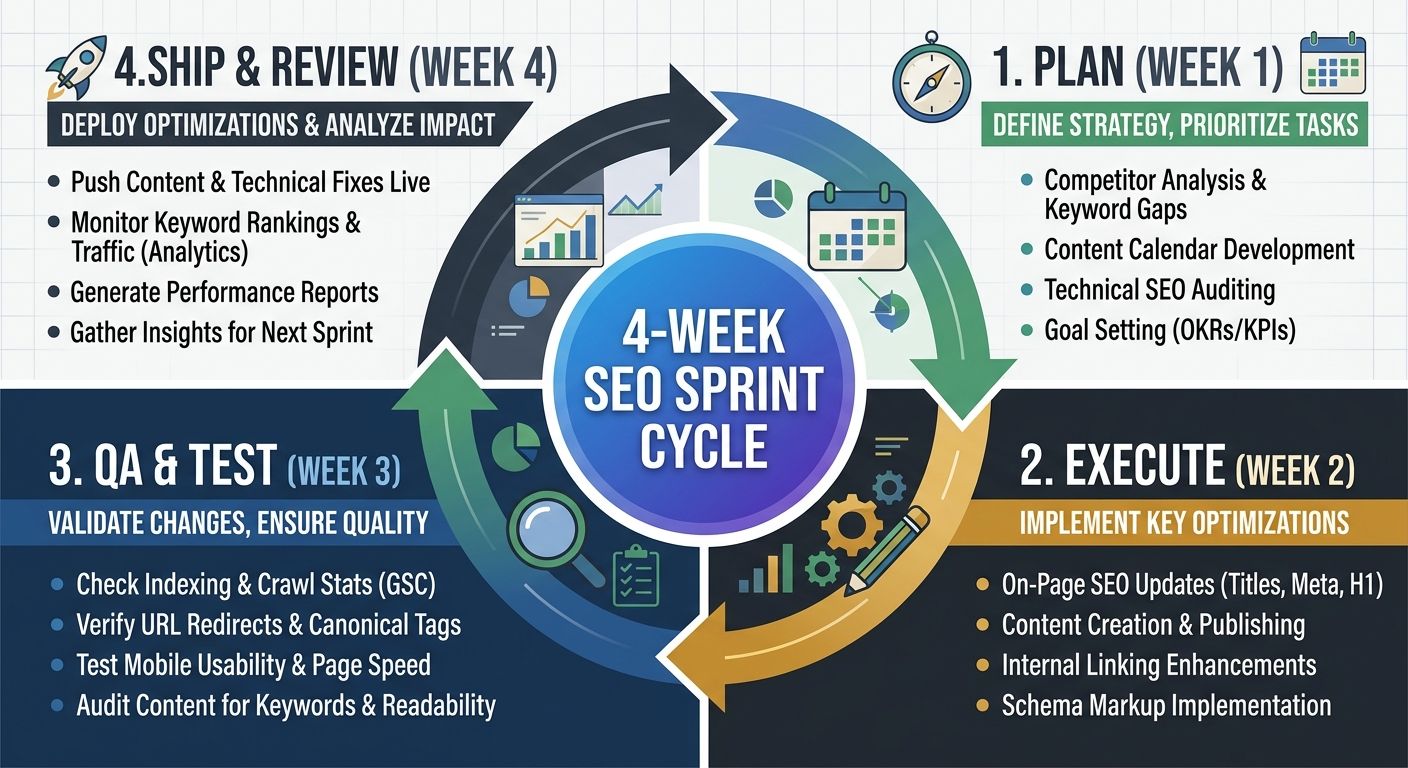

The 4-Week Sprint Structure, Week by Week

I've tested 1-week, 2-week, and 4-week sprint lengths. The 1-week sprint works for technical SEO tasks like fixing crawl errors or updating meta descriptions, but it's too short for content production. The 2-week sprint works for small teams. The 4-week sprint is what scales across content, technical, and link-building workstreams simultaneously.

Here's how the four weeks break down.

Week 1: Sprint Planning and Prioritization (Monday–Friday)

The sprint starts with a planning session capped at four hours. That constraint is deliberate. If you can't prioritize your work in four hours, your backlog isn't organized well enough.

During planning, the SEO lead pulls three data sources: Google Search Console performance data from the previous 28 days, ranking position changes for tracked keywords, and a content gap analysis against top-three competitors for the sprint's focus cluster. The team then selects 8–12 tasks that map to a single sprint goal tied to a business outcome.

A good sprint goal reads like this: "Move six service pages from positions 8–20 into positions 1–5 for their primary keywords." Pages ranking between 8 and 20 represent some of the highest-ROI optimization targets because, based on documented case studies, moving them into the top five can triple their traffic. A bad sprint goal reads: "Improve SEO."
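As a rough illustration of how that 8–20 band could be pulled from performance data, here is a minimal sketch. The `PageStats` fields and the sample rows are hypothetical stand-ins for an export from a Search Console performance report, not a real API response.

```python
from dataclasses import dataclass


@dataclass
class PageStats:
    url: str
    avg_position: float  # average position over the last 28 days
    impressions: int


def striking_distance(pages, low=8.0, high=20.0):
    """Return pages whose average position falls in [low, high], busiest first."""
    candidates = [p for p in pages if low <= p.avg_position <= high]
    return sorted(candidates, key=lambda p: p.impressions, reverse=True)


# Hypothetical rows for illustration only.
pages = [
    PageStats("/services/audit", 11.4, 5200),
    PageStats("/services/migration", 3.2, 9100),
    PageStats("/services/local-seo", 18.9, 2400),
    PageStats("/blog/old-post", 42.0, 300),
]

targets = striking_distance(pages)
for page in targets:
    print(page.url, page.avg_position, page.impressions)
```

Sorting by impressions is one possible tiebreaker; a team could just as reasonably sort by conversion value or by distance from position five.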

The rest of Week 1 is production kickoff. Writers receive briefs. Developers get technical tickets. The link-building specialist starts outreach.

This planning phase benefits enormously from having a clear keyword prioritization framework already in place, so sprint planning becomes selection from a pre-scored backlog rather than starting from scratch each month.
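Selection from a pre-scored backlog might look something like the sketch below. The `score` field and the task names are assumptions made for illustration; the scoring formula itself would come from whatever prioritization framework the team already uses.

```python
def select_sprint_tasks(backlog, min_tasks=8, max_tasks=12):
    """Pick the highest-scoring backlog items, capped at max_tasks.

    Raises ValueError when the backlog cannot fill even the minimum,
    which is a signal to groom the backlog before planning.
    """
    ranked = sorted(backlog, key=lambda item: item["score"], reverse=True)
    selected = ranked[:max_tasks]
    if len(selected) < min_tasks:
        raise ValueError(
            f"only {len(selected)} scored tasks available, need at least {min_tasks}"
        )
    return selected


# Hypothetical backlog: 15 tasks with scores 0 through 14.
backlog = [{"task": f"task-{i}", "score": i} for i in range(15)]

sprint_tasks = select_sprint_tasks(backlog)
print([t["task"] for t in sprint_tasks])
```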

Week 2: Execution and Content Production

Week 2 is heads-down production. Writers draft content and developers implement technical changes, but drafting on any piece starts only after the SEO lead has approved its brief. That approval acts as the quality gate.

The brief approval gate is critical. As monday.com's SEO workflow research documents, coordination between SEO strategists defining opportunities, writers executing content, and designers creating custom assets requires explicit alignment before production starts. Without it, you get content that's well-written but poorly optimized, or technically correct but missing the search intent entirely.

I schedule one 30-minute standup at the end of Week 2 to catch blockers before they cascade into Week 3.

Week 3: QA, Internal Linking, and Midcycle Review

Week 3 is where most SEO teams drop the ball. Content is "done" but not published. Technical tickets are merged but not verified. This is the week that separates teams that ship from teams that accumulate work-in-progress.

Wednesday of Week 3 is QA day. Every piece of content gets checked for on-page optimization, internal linking, schema markup, and Core Web Vitals impact. Every technical change gets verified in staging. For URLs that are already live, the SEO lead runs URL Inspection in Google Search Console to confirm indexability; pages still in staging get that check after they publish, since the tool needs a URL Googlebot can reach.

Internal linking deserves specific attention here. Each new piece should connect to at least three existing pages in the topic cluster, and those existing pages should link back. If your site architecture doesn't signal topical authority to Google, individual page performance will plateau regardless of content quality.
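The three-links-and-back rule can be checked mechanically. The sketch below assumes you already have a map of each URL to the internal URLs it links to (from a crawl, for example); the function name and the sample URLs are hypothetical.

```python
def audit_new_page(new_url, cluster, links, min_links=3):
    """Check a new page's links into its topic cluster and the links back.

    cluster: set of URLs in the topic cluster
    links:   dict mapping a URL to the set of internal URLs it links to
    """
    # Only links that point at other pages in the same cluster count.
    outbound = links.get(new_url, set()) & (cluster - {new_url})
    # Cluster pages the new page links to that do not link back yet.
    missing_backlinks = sorted(
        target for target in outbound if new_url not in links.get(target, set())
    )
    return {
        "outbound_count": len(outbound),
        "outbound_ok": len(outbound) >= min_links,
        "missing_backlinks": missing_backlinks,
    }


# Hypothetical cluster and link map for illustration.
cluster = {"/guide", "/a", "/b", "/c", "/d"}
links = {
    "/guide": {"/a", "/b", "/c", "/about"},
    "/a": {"/guide"},
    "/b": {"/guide"},
    "/c": {"/d"},
}

result = audit_new_page("/guide", cluster, links)
print(result)
```

Here the new page meets the three-link minimum, but `/c` still needs a link back before the piece passes QA.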

Alerts should trigger automatically if rankings decline or organic traffic drops for existing pages, creating refresh items that feed into the next sprint's backlog rather than getting lost in a spreadsheet.
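One way such an alert could feed the backlog is sketched below. The thresholds (a drop of three or more positions, or a 20% fall in clicks) are illustrative assumptions, not figures from any cited source, and the snapshot fields are hypothetical.

```python
def refresh_candidates(snapshots, max_position_drop=3.0, max_click_drop=0.20):
    """Turn period-over-period declines into refresh items for the next backlog."""
    items = []
    for snap in snapshots:
        # A positive value means the page moved down the results.
        position_drop = snap["position_now"] - snap["position_prev"]
        click_drop = 0.0
        if snap["clicks_prev"] > 0:
            click_drop = (snap["clicks_prev"] - snap["clicks_now"]) / snap["clicks_prev"]

        reasons = []
        if position_drop >= max_position_drop:
            reasons.append("ranking decline")
        if click_drop >= max_click_drop:
            reasons.append("traffic drop")
        if reasons:
            items.append({"url": snap["url"], "reasons": reasons})
    return items


# Hypothetical comparison of two consecutive 28-day periods.
snapshots = [
    {"url": "/pricing", "position_prev": 4.0, "position_now": 9.5,
     "clicks_prev": 800, "clicks_now": 500},
    {"url": "/features", "position_prev": 6.0, "position_now": 5.0,
     "clicks_prev": 300, "clicks_now": 310},
    {"url": "/blog/x", "position_prev": 12.0, "position_now": 12.5,
     "clicks_prev": 100, "clicks_now": 70},
]

refresh_items = refresh_candidates(snapshots)
print(refresh_items)
```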

Week 4: Publishing, Reporting, and Retrospective

Content publishes Monday through Wednesday of Week 4. Thursday is for compiling the sprint delivery report. Friday is the retrospective.

The delivery report has five sections:

1. Sprint goal and status (met, partially met, missed)
2. Items shipped with live URLs
3. QA checks completed and any issues flagged
4. Ranking movement for sprint-focus keywords (early signals only, since full impact takes 4–8 weeks)
5. Backlog recommendations for the next sprint
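If the report lives in code or a shared tool rather than a document, the five sections above could be captured in a small structure like this one. The class and field names are hypothetical; only the section contents and the three status values come from the list.

```python
from dataclasses import dataclass, field

VALID_STATUSES = {"met", "partially met", "missed"}


@dataclass
class DeliveryReport:
    sprint_goal: str
    status: str  # one of VALID_STATUSES
    shipped_urls: list = field(default_factory=list)
    qa_notes: list = field(default_factory=list)
    ranking_movement: dict = field(default_factory=dict)  # keyword -> (before, after)
    backlog_recommendations: list = field(default_factory=list)

    def __post_init__(self):
        if self.status not in VALID_STATUSES:
            raise ValueError(f"status must be one of {sorted(VALID_STATUSES)}")

    def summary(self):
        return f"{self.sprint_goal} [{self.status}]: {len(self.shipped_urls)} items shipped"


# Hypothetical report for illustration.
report = DeliveryReport(
    sprint_goal="Move six service pages into positions 1-5",
    status="partially met",
    shipped_urls=["/services/audit", "/services/local-seo"],
    qa_notes=["Schema markup missing on /services/local-seo"],
    ranking_movement={"seo audit service": (11, 7)},
    backlog_recommendations=["Refresh /services/migration"],
)
print(report.summary())
```

Validating the status up front keeps reports comparable from sprint to sprint, which matters once you start looking at trends across retrospectives.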

The retrospective takes 60 minutes. The team answers three questions: What shipped on time and why? What didn't ship and why? What should we change about the process for next sprint?

This cyclical approach to updating existing content follows a workflow triggered by data rather than arbitrary editorial calendars. When teams identify missing elements, outdated statistics, or coverage gaps through their sprint QA process, those items become prioritized backlog entries.

Documented Outcomes from Sprint-Based Execution

The numbers from teams running sprint-based SEO tell a consistent story. One documented case showed a domain rating increase from 2.3 to 14 with backlinks growing from 138 to 401 within 60 days of sprint execution. Another showed a 73% increase in bookings after doubling organic traffic through targeted sprint cycles focused on commercial-intent pages.

These results aren't magic. They're the compound effect of consistent weekly actions: publishing supporting content, refreshing near-ranking pages, building internal links, and collecting structured data about what's working.

The consistency angle matters more than most teams realize. As we've explored in analyzing why consistency metrics outperform publishing frequency, a team that publishes two well-optimized pieces per week for 12 consecutive weeks outperforms a team that publishes eight pieces in the first week of each month and nothing for the next three, even though both teams ship 24 pieces over the same period.

Scaling the Sprint Across Teams

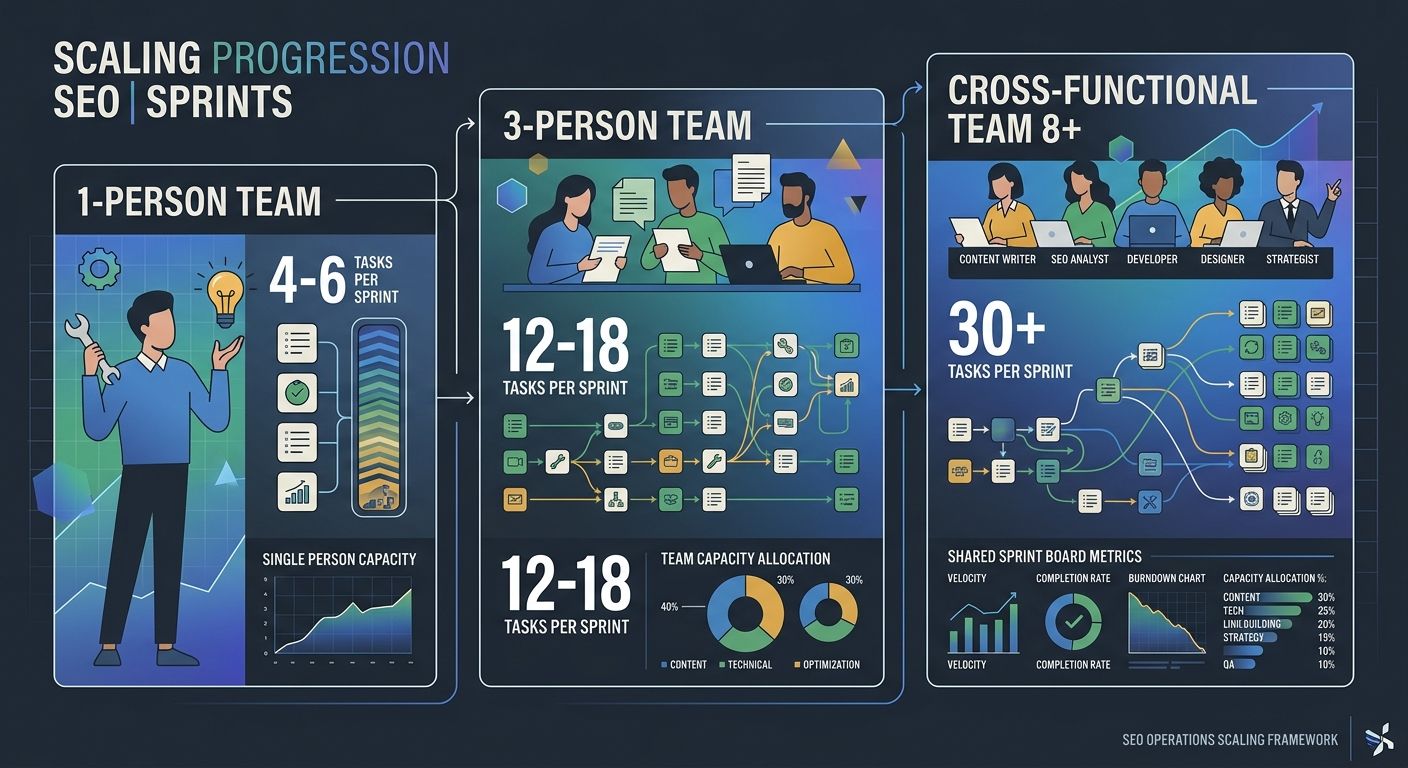

The 4-week sprint works for a solo SEO practitioner or a three-person team. Scaling it to cross-functional teams requires two structural additions.

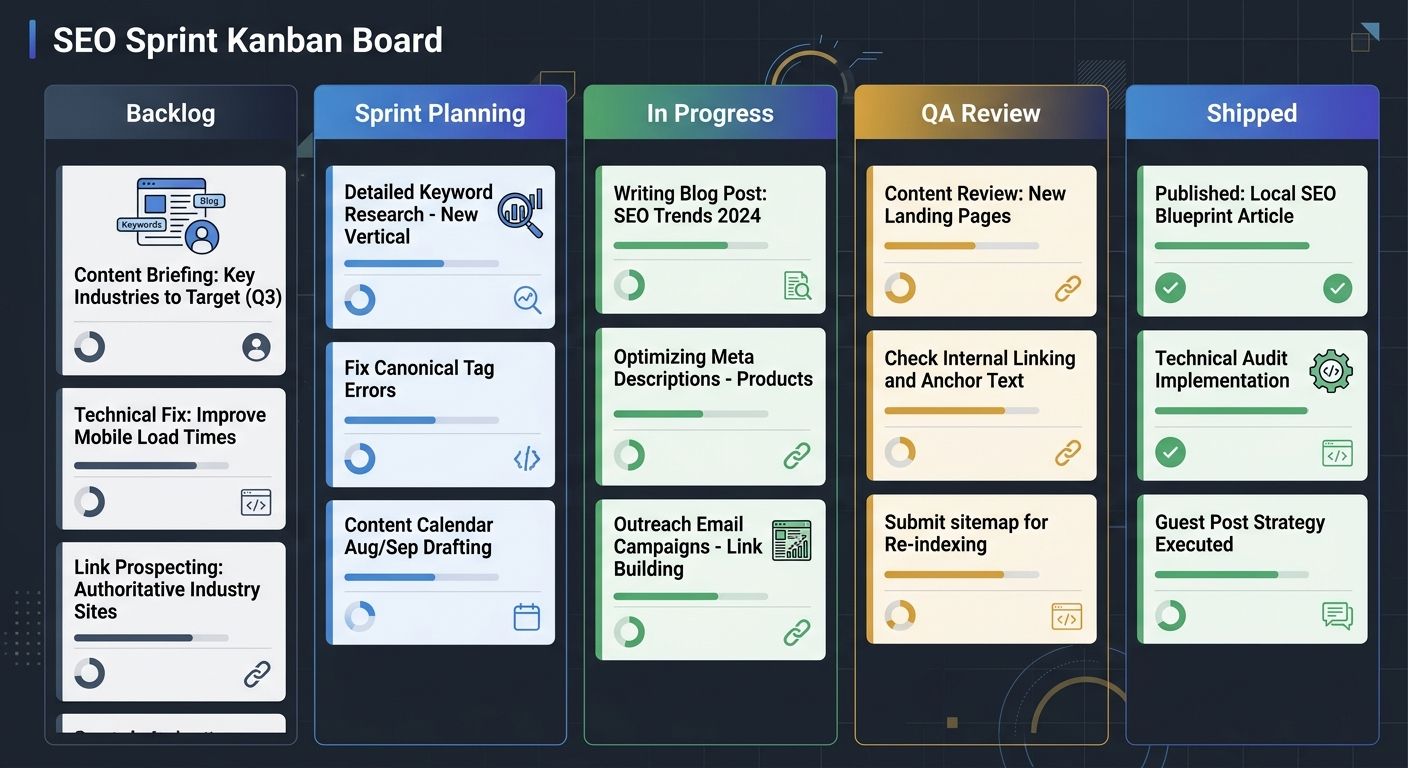

First, you need a shared sprint board visible to content, development, and design. Each team maintains its own task list, but all tasks roll up to the shared sprint goal. When building cross-team SEO workflows, this shared visibility prevents the common failure mode where content ships on time but the developer hasn't implemented the schema markup, or design hasn't created the custom graphics referenced in the brief.

Second, you need sprint-level capacity planning. A four-person content team can realistically produce 6–8 long-form pieces per sprint alongside 3–4 content refreshes. A solo developer can handle 10–15 technical SEO tickets per sprint. Overloading either function means nothing ships completely.
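A capacity check along these lines can run during planning. This sketch uses the upper ends of the ranges above as ceilings for a four-person content team and a solo developer; the workstream keys and the planned counts are hypothetical, and your own ceilings should come from past sprint data.

```python
# Upper bounds taken from the ranges in the text above.
CAPACITY = {"long_form": 8, "refresh": 4, "technical_ticket": 15}


def overloaded_workstreams(planned, capacity=CAPACITY):
    """Return each workstream that exceeds capacity, mapped to its overage."""
    return {
        stream: count - capacity[stream]
        for stream, count in planned.items()
        if stream in capacity and count > capacity[stream]
    }


# Hypothetical sprint plan for illustration.
planned = {"long_form": 10, "refresh": 3, "technical_ticket": 15}

overage = overloaded_workstreams(planned)
print(overage)
```

An empty result means the plan fits; anything else names the workstream to trim before the sprint starts.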

AI tools can absorb some of the repetitive workload. Teams using AI for tasks like metadata drafting, keyword clustering, and automating high-volume SEO workflows report saving approximately four hours per week, which adds up to about five weeks of recovered productivity per year. That recovered time goes into strategic work: analyzing competitors, refining topic clusters, and improving conversion paths on landing pages.
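The arithmetic behind that five-week figure is easy to check. This sketch assumes 52 weeks of savings per year and a 40-hour work week, neither of which is stated in the source.

```python
HOURS_SAVED_PER_WEEK = 4   # figure reported above
WEEKS_PER_YEAR = 52        # assumption: savings apply every week of the year
HOURS_PER_WORK_WEEK = 40   # assumption: standard full-time week

hours_per_year = HOURS_SAVED_PER_WEEK * WEEKS_PER_YEAR
work_weeks_recovered = hours_per_year / HOURS_PER_WORK_WEEK

print(hours_per_year, work_weeks_recovered)
```

That comes to 208 hours, or a little over five 40-hour weeks. With vacation and holidays removed the number lands slightly lower.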

Common Failure Modes I've Observed

Three patterns kill sprint-based SEO execution repeatedly.

The first is scope creep within the sprint. Someone adds "just one more" content piece or technical ticket mid-cycle. That one addition displaces QA time in Week 3, which means content publishes without proper internal linking or schema markup, which means the sprint's ranking impact diminishes. Anything added mid-sprint goes into the backlog for next sprint. No exceptions.

The second is skipping the retrospective because "we already know what happened." You don't. The retrospective surfaces process problems that feel invisible during execution. One team I worked with discovered through retrospectives that their content briefs consistently underestimated word count by 40%, which caused writer delays in every sprint. A simple template change fixed a recurring bottleneck.

The third is measuring sprint success by output rather than outcomes. Shipping 12 pieces of content means nothing if none of them rank. Track the ratio of shipped content that reaches page one within 90 days. If that ratio drops below 30%, the problem is targeting or quality, not velocity.
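The ratio itself takes only a few lines to compute. This sketch treats "page one" as the date a piece first reached a top-10 position, and assumes you only pass in pieces published at least 90 days ago; the record fields and sample dates are hypothetical.

```python
from datetime import date


def page_one_ratio(pieces, window_days=90):
    """Share of shipped pieces that reached page one within the window."""
    if not pieces:
        return 0.0
    hits = 0
    for piece in pieces:
        reached = piece["first_page_one"]  # date, or None if it never got there
        if reached is not None and (reached - piece["published"]).days <= window_days:
            hits += 1
    return hits / len(pieces)


# Hypothetical sprint output, all published on the same day.
pieces = [
    {"url": "/a", "published": date(2024, 1, 8), "first_page_one": date(2024, 2, 7)},
    {"url": "/b", "published": date(2024, 1, 8), "first_page_one": date(2024, 5, 7)},
    {"url": "/c", "published": date(2024, 1, 8), "first_page_one": None},
    {"url": "/d", "published": date(2024, 1, 8), "first_page_one": None},
]

ratio = page_one_ratio(pieces)
needs_review = ratio < 0.30  # threshold from the text above
print(ratio, needs_review)
```

In this sample only one of four pieces made page one inside the window, so the sprint falls under the 30% line and targeting or quality deserves a closer look.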

What the Data Doesn't Tell Us

The documented results from sprint-based SEO are compelling, but several questions remain unanswered by the available data.

We don't have controlled studies comparing identical sites running sprint versus waterfall SEO over 12+ months. The case studies showing DR jumps and traffic increases involve teams that were likely underperforming before adopting sprints, which means some of the improvement reflects moving from disorganized execution to any organized execution, not specifically the sprint format.

We also don't know the long-term sustainability curve. Teams report productivity gains in the first three to six months of sprint adoption, but there's limited data on whether retrospectives continue producing meaningful process improvements after 18 or 24 months, or whether teams plateau and the ritual becomes performative.

And the AI productivity gains of four hours per week assume specific task types and team configurations. Teams with highly specialized content requirements or regulated industries may see less benefit from AI-assisted metadata and clustering because every output requires manual review against compliance standards.

The sprint system I've described here works. The documented outcomes support it, and my own experience across multiple teams confirms the pattern. But treating any framework as settled science invites the kind of rigidity that sprints were designed to prevent. Run the system, measure what it produces for your specific team, and adjust. The retrospective exists for exactly this reason.

Alex Chen

Alex Chen is a digital marketing strategist with over 8 years of experience helping enterprise brands and agencies scale their online presence through data-driven campaigns. He has led marketing teams at two successful SaaS startups and specializes in conversion optimization and multi-channel attribution modeling. Alex combines technical expertise with strategic thinking to deliver actionable insights for marketing professionals looking to improve their ROI.
